LLMs for PMs: From Chatbots to Coworkers

AI adoption in Product Management has moved fast: from experimentation in 2024, to everyday usage in 2025, and now into a new phase in 2026. This talk explores the shift from standalone AI tools to agentic workflows, explains how we got here, and focuses on what this change means for PMs who use AI to augment their own work. You'll leave with a clear mental model of the 2026 AI landscape and how Product Managers can operate effectively within it.

Svyatoslav Hvozdynskyy · Product Manager · Presented at Product Tank Warsaw, 04.02.2026

Slides - LLMs for PMs: From Chatbots to Coworkers

The PMs who win in 2026 won't have better prompts. They'll have better systems.

Here's a question that's been nagging me: if every PM now has access to the same AI models, the same tools, the same capabilities — where does the advantage come from?

Not from the model. Not from the prompt. The advantage comes from the system of work you design around it.

I've spent the last two years building AI products in enterprise environments and — like most of you — obsessively experimenting with every new tool along the way. What I've noticed is that the conversation in our community has shifted three times in rapid succession, and most PMs are still operating in the mindset of the previous phase.

Let me explain.

Three phases, three mindsets

In 2024, AI was a toy. A fascinating, addictive toy — but a toy. We prompted chatbots, got surprisingly good outputs, and shared screenshots with colleagues. Every week brought a new tool. Every morning, a new prompt trick. It was the honeymoon phase, and it was fun.

In 2025, AI became infrastructure. It moved into the mundane parts of the job — writing docs, drafting comms, generating prototypes, summarizing meetings. The novelty wore off. What replaced it was something more valuable: habit. AI became a workhorse, and the PMs who embraced it gained real speed advantages.

The mantra of that era was: "AI won't replace PMs, but PMs using AI will."

Fair enough. But that framing has a ceiling. Being faster at producing artifacts is useful, but it's not transformative. The hard problems in product management were never about typing speed. They were about understanding users, testing assumptions, and making good decisions under uncertainty.

Which brings us to 2026 — and the shift that actually matters.

The real question AI should answer isn't "how do I write this faster?"

It's: "What's actually worth building?"

Think about where you spend your time as a PM. A significant chunk goes to production — documents, tickets, slides, specs. AI has already compressed that work dramatically. But the highest-leverage activities — discovery, prioritization, strategic thinking — remain largely untouched. We're optimizing the assembly line while the real bottleneck is upstream.

The PMs I admire most aren't the ones producing more artifacts. They're the ones who've redirected their freed-up time toward better learning loops: faster research cycles, tighter feedback integration, more rigorous assumption testing.

That's the real dividend of AI adoption. Not more output. Better input to decisions.

Then agents arrived and changed the game

Somewhere in the middle of 2025, I hit a wall. I stopped chasing every new model and protocol, not from lack of interest but from cognitive overload. The pace of releases was simply indigestible.

And then something happened that didn't feel like another incremental upgrade. AI agents emerged — and they changed the fundamental interaction model.

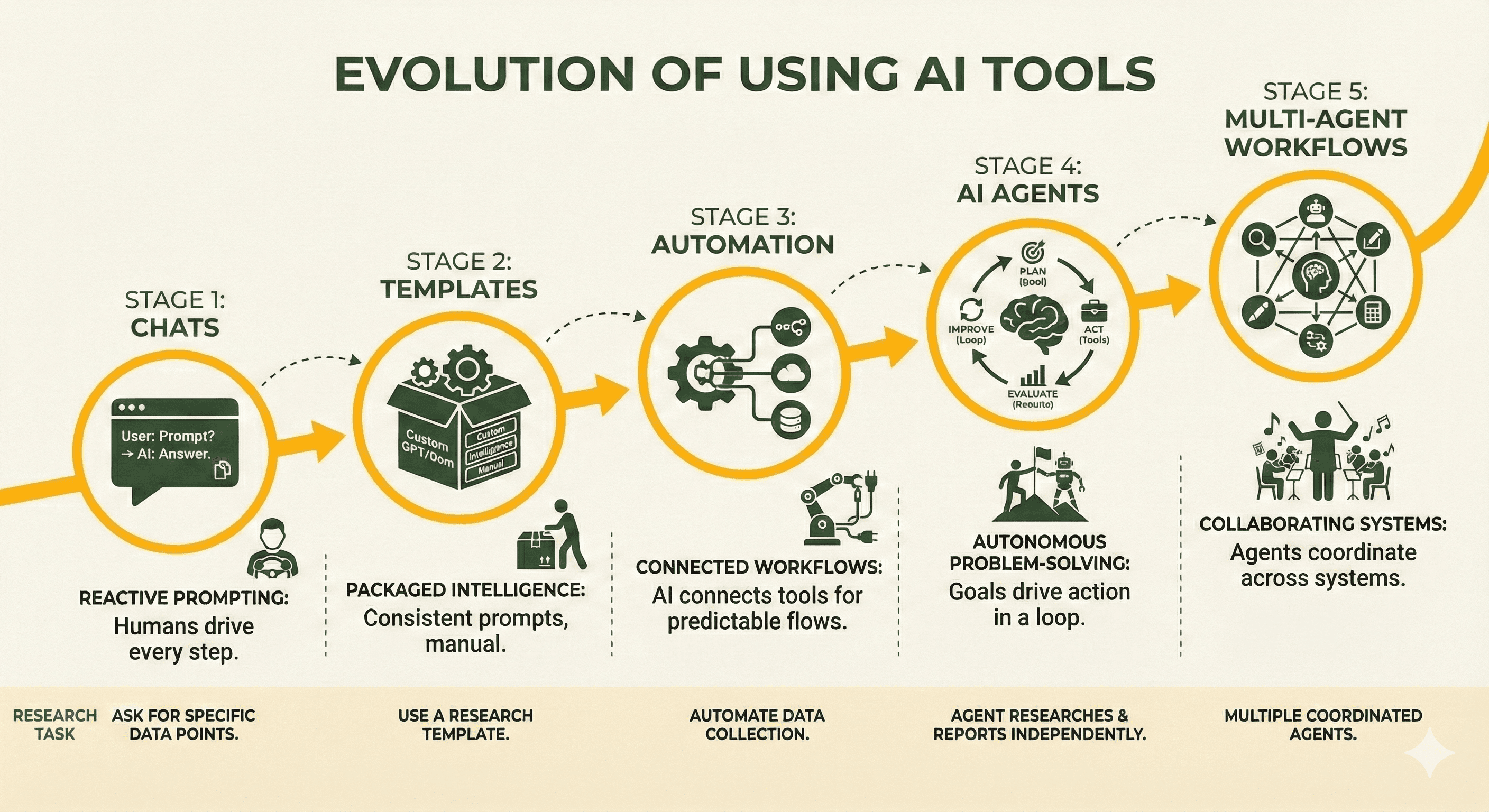

To understand why this matters, consider how our relationship with AI tools has evolved:

Stage 1 — Chat. You ask, it answers. You copy-paste, you decide. This is where most people still live.

Stage 2: Packaged intelligence. Custom assistants, reusable templates, saved contexts. Think custom GPTs or Gemini Gems. More consistent, but still entirely manual.

Stage 3: Automation. AI connected to workflows and external tools. Reliable for predictable processes. Brittle when judgment is needed. This is where platforms like n8n found their audience.

Stage 4 — Agents. Systems that don't just respond — they reason, plan, select tools, and iterate. They decide what to do next, not just what to say.

Stage 5 — Multi-agent systems. Multiple specialized agents coordinating across tools, data sources, and workflows to accomplish complex goals.

The critical shift across this arc: we went from asking for answers to giving goals.

That's not a feature upgrade. That's a paradigm change.

What this looks like in practice

Let me make this concrete.

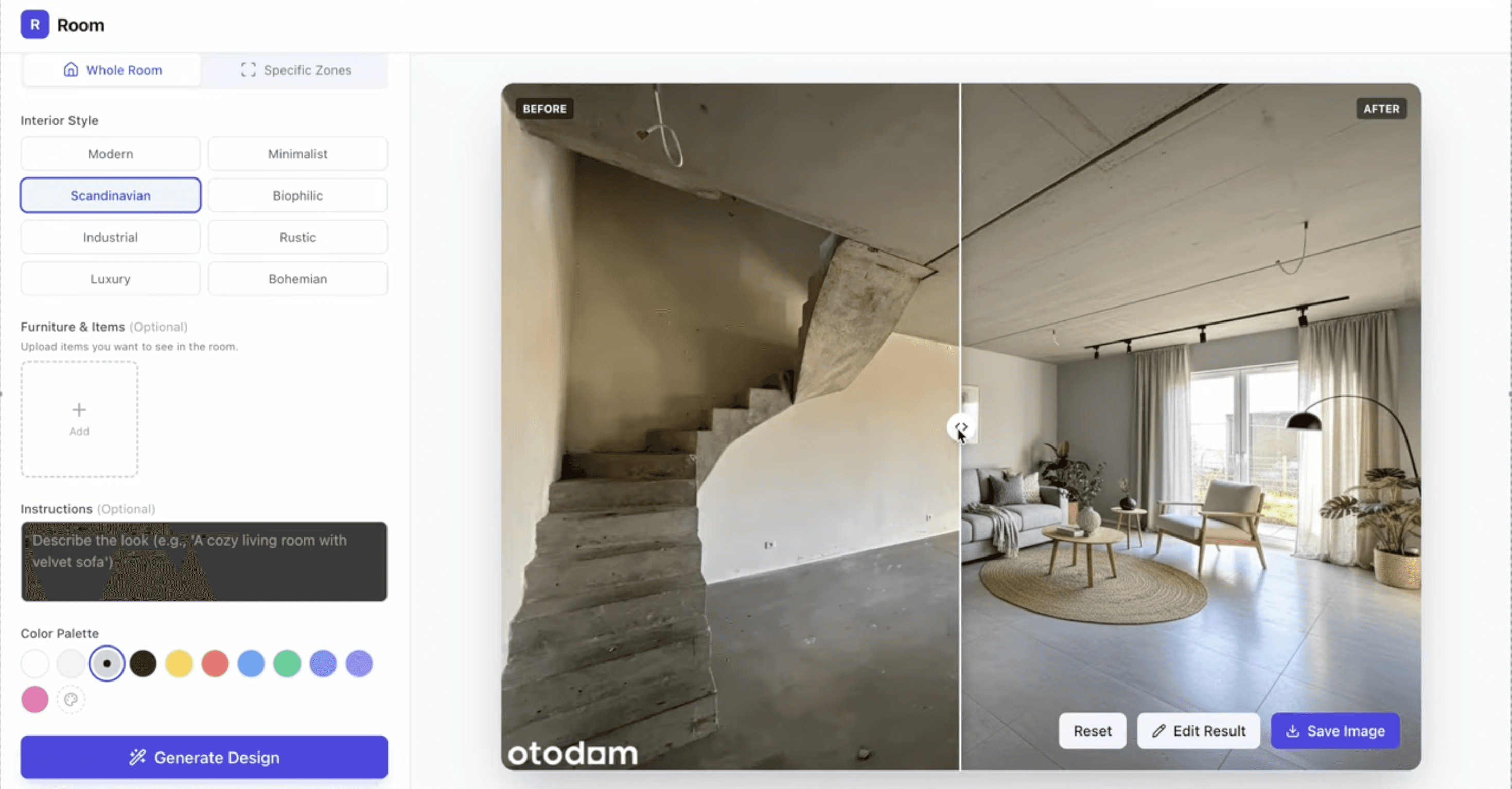

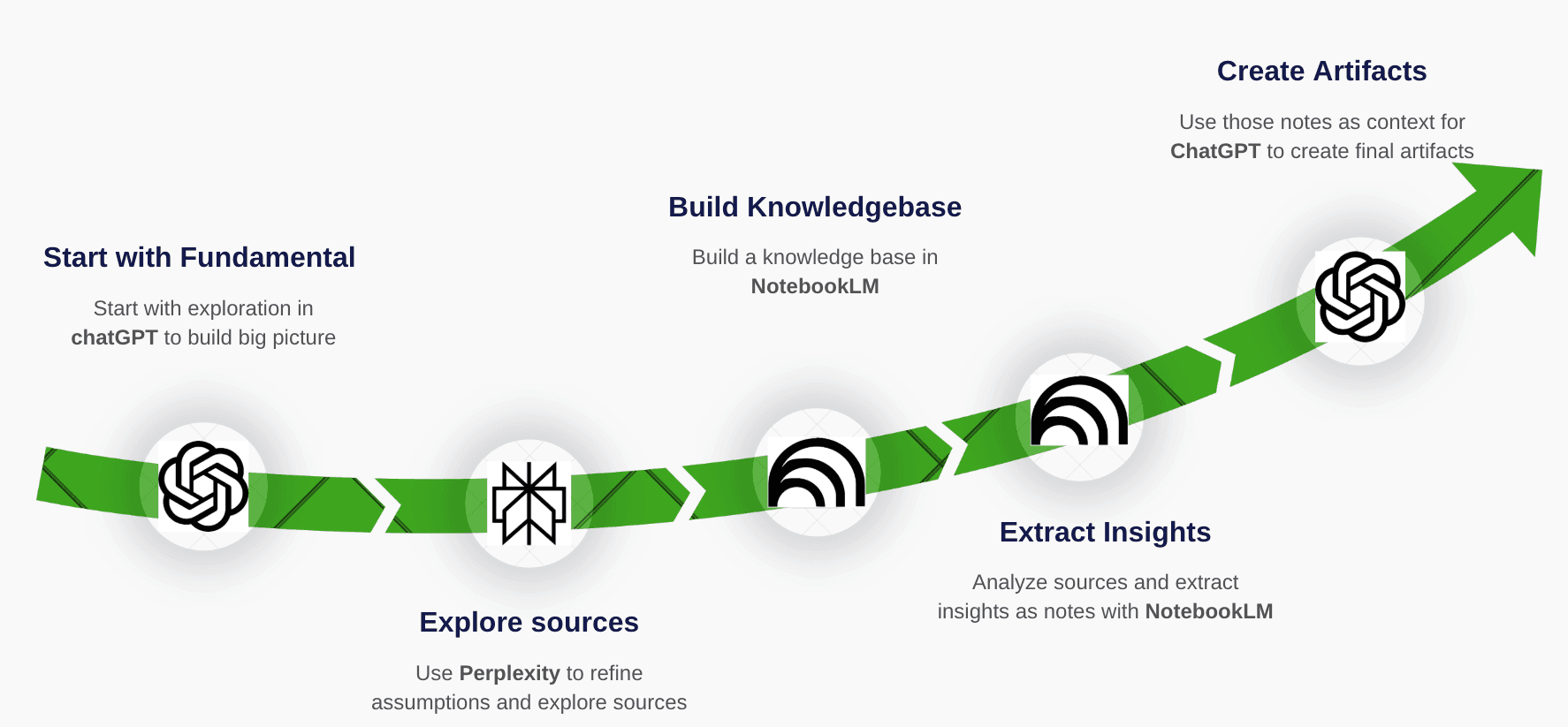

In 2024, my research workflow was a four-step manual chain: explore a topic in a chatbot, verify facts with Perplexity, build a knowledge base in NotebookLM, synthesize a final document back in the chat. Each step required my attention and my hands. It worked, but it was slow.

Then Deep Research launched — first from Google, then OpenAI and Perplexity released competing versions within weeks. And suddenly, my entire workflow was obsolete.

Here's what changed: instead of orchestrating four tools manually, I described a goal. The agent planned the research steps, searched autonomously, evaluated source quality, adjusted its strategy when results were thin, and delivered a structured report with citations. No hand-holding. No tab-switching.

That's not better search. That's delegation.
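The loop described above (plan, search, evaluate, adjust, report) can be pictured with a toy Python sketch. This is not how any vendor's Deep Research product works internally. The names `run_research`, `plan_queries`, and `search`, the "too few sources" threshold, and the query-broadening rule are all hypothetical stand-ins chosen only to make the control flow visible.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Finding:
    query: str
    sources: list[str]


@dataclass
class Report:
    goal: str
    findings: list[Finding] = field(default_factory=list)
    gaps: list[str] = field(default_factory=list)


def run_research(goal: str,
                 plan_queries: Callable[[str], list[str]],
                 search: Callable[[str], list[str]],
                 min_sources: int = 2,
                 max_retries: int = 1) -> Report:
    """Toy goal-driven loop: plan, search, evaluate, adjust, report."""
    report = Report(goal=goal)
    for query in plan_queries(goal):            # plan: break the goal into queries
        attempt = query
        sources = search(attempt)               # act: run the search
        retries = 0
        # evaluate: results count as "thin" when too few sources come back
        while len(sources) < min_sources and retries < max_retries:
            attempt = f"{query} overview"       # adjust: broaden the query (hypothetical rule)
            sources = search(attempt)
            retries += 1
        if len(sources) >= min_sources:
            report.findings.append(Finding(attempt, sources))
        else:
            report.gaps.append(query)           # report what could not be supported
    return report
```

The detail worth noticing is the `gaps` list: a loop like this should surface what it failed to support rather than quietly dropping it, which is what makes the output reviewable by a human.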

And what startled me most: what felt groundbreaking in December felt routine by March. The speed of normalization is itself a signal PMs need to pay attention to.

Why coding agents matter for non-technical PMs

Here's where it gets interesting — and where I see most PMs missing the plot.

Coding agents (Claude Code, Gemini CLI, Cursor, and others) are exploding in popularity. And the instinctive PM reaction is: "That's for developers, not for me."

Wrong framing.

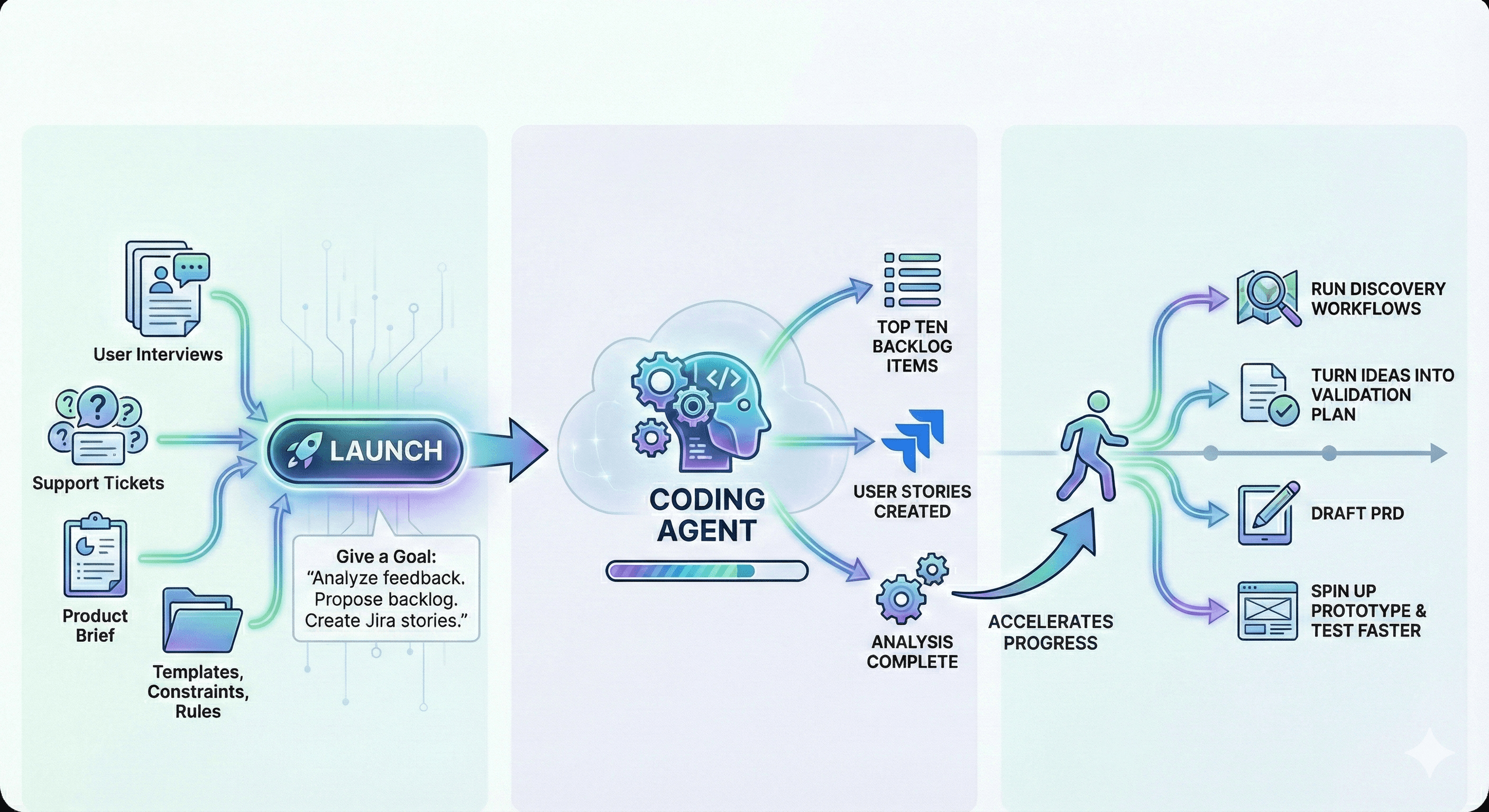

The pattern these tools embody — files as context, an editor as workspace, AI that can plan across multiple sources and act autonomously — is a general-purpose workflow pattern. It just happens to have arrived in developer tooling first.

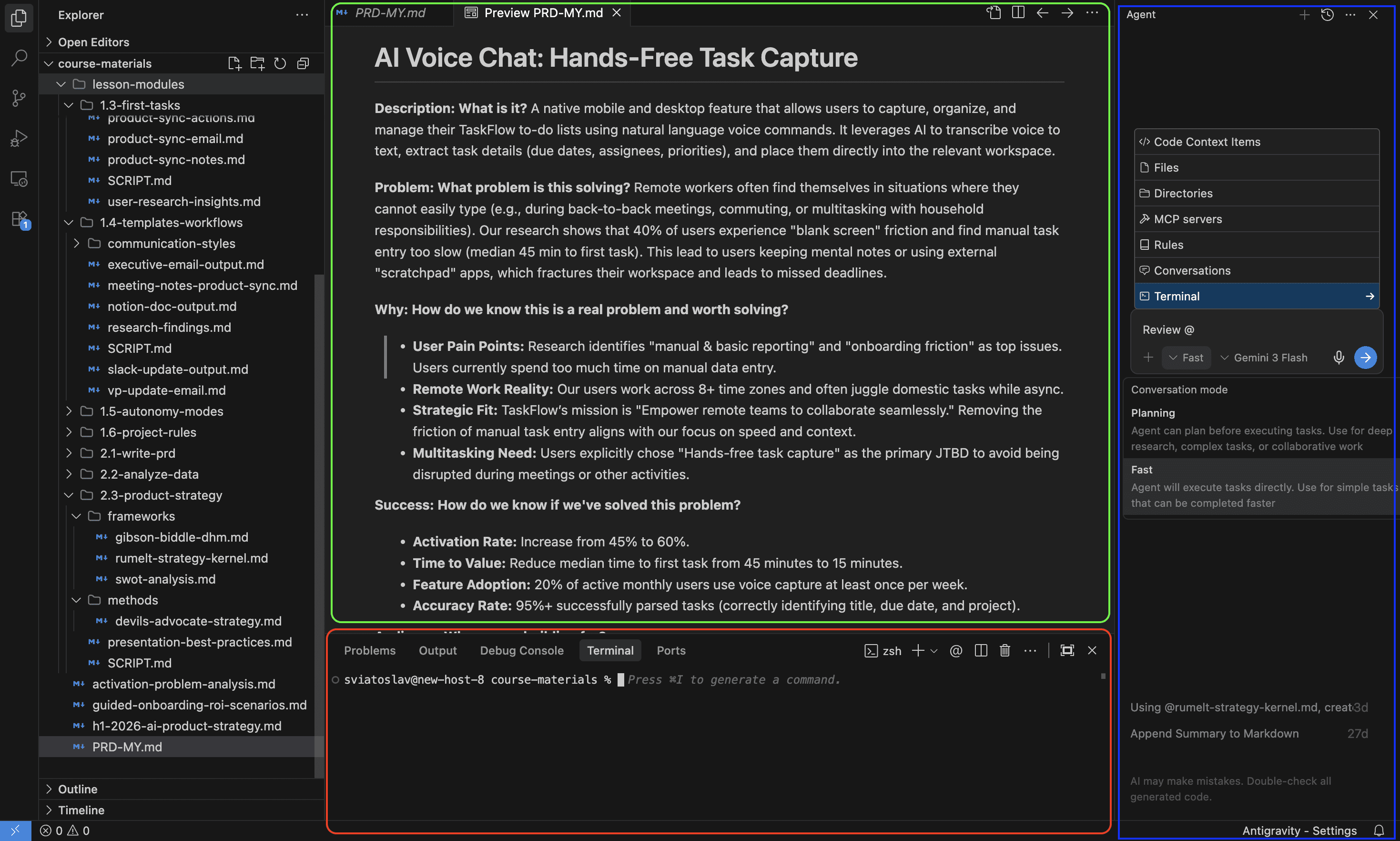

Now imagine applying it to PM work. A project folder contains user interviews, support tickets, a product brief, and a set of files defining your templates, constraints, and decision criteria. You give a goal: "Analyze this feedback. Propose ten prioritized backlog items using this framework. Draft user stories. Push them to Jira."

You launch it. While it runs, you move on to something else — reviewing a PRD, preparing for a stakeholder meeting, running a different discovery workflow.

The agent delivers structured output. You review, refine, and decide.
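To make the "files as context" idea tangible, here is a minimal Python sketch that turns a project folder and a goal into a single task brief. It is an illustration under stated assumptions, not the mechanism any particular coding agent uses: `build_brief`, the `.md`/`.txt` filter, and the `GOAL`/`FILE` labels are invented for this example, and the step of handing the brief to an agent or pushing results to Jira is deliberately left out.

```python
from pathlib import Path


def build_brief(project_dir: str, goal: str, max_chars: int = 2000) -> str:
    """Assemble a goal plus the folder's text files into one task brief."""
    sections = [f"GOAL\n{goal}"]
    for path in sorted(Path(project_dir).rglob("*")):
        # Only plain-text context files are included (assumed convention).
        if path.is_file() and path.suffix in {".md", ".txt"}:
            text = path.read_text(encoding="utf-8")[:max_chars]  # truncate long files
            rel = path.relative_to(project_dir).as_posix()
            sections.append(f"FILE {rel}\n{text}")
    return "\n\n".join(sections)
```

Calling `build_brief("my-project", "Analyze this feedback and propose ten prioritized backlog items")` would return one string with the goal first and each interview, ticket export, or criteria file after it, labeled by its path so the output can cite where a claim came from.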

This isn't science fiction. This is the workflow emerging right now. And it changes something fundamental about the PM role: instead of walking into alignment meetings with opinions, you walk in with evidence, options, and documented trade-offs.

Not because you're working harder. Because you're orchestrating better.

The trap I fell into (and you will too)

I have to be honest about a mistake, because it's the trap waiting for every PM who gets excited about agents.

The first time I let an agent synthesize user research end-to-end, the output was impressive. Confident tone. Clean structure. Strong recommendations. It sounded right.

It wasn't. The summary was optimized for coherence, not truth. It smoothed over contradictions in the data, downplayed edge cases, and presented uncertain signals as validated insights. If I'd shared it without scrutiny, I would have misled my team.

That was my wake-up call. Speed without judgment doesn't create leverage. It creates risk.

Agents are powerful, but they're not accountable. You are. The PM's job in an agentic workflow isn't to press "go" and trust the output. It's to define the rules, set the constraints, design the verification steps, and take responsibility for the result.

The "10x PM" myth

This brings me to a phrase I keep hearing: "10x PM." And I think it's dangerous.

If "10x" means shipping ten times more tickets, you haven't become more effective. You've just accelerated the treadmill. More output without better judgment is just more noise.

AI doesn't make you 10x by helping you do more. It makes you 10x by changing what's possible — deeper research in less time, faster validation cycles, richer context for every decision, more rigorous testing of assumptions you'd previously have shipped on gut feel.

A real 10x PM improves two things: the rate of learning and the quality of decisions. Everything else is a vanity metric.

The PM as orchestrator

So what does the PM role actually look like in 2026?

Less like a backlog owner. More like an orchestrator.

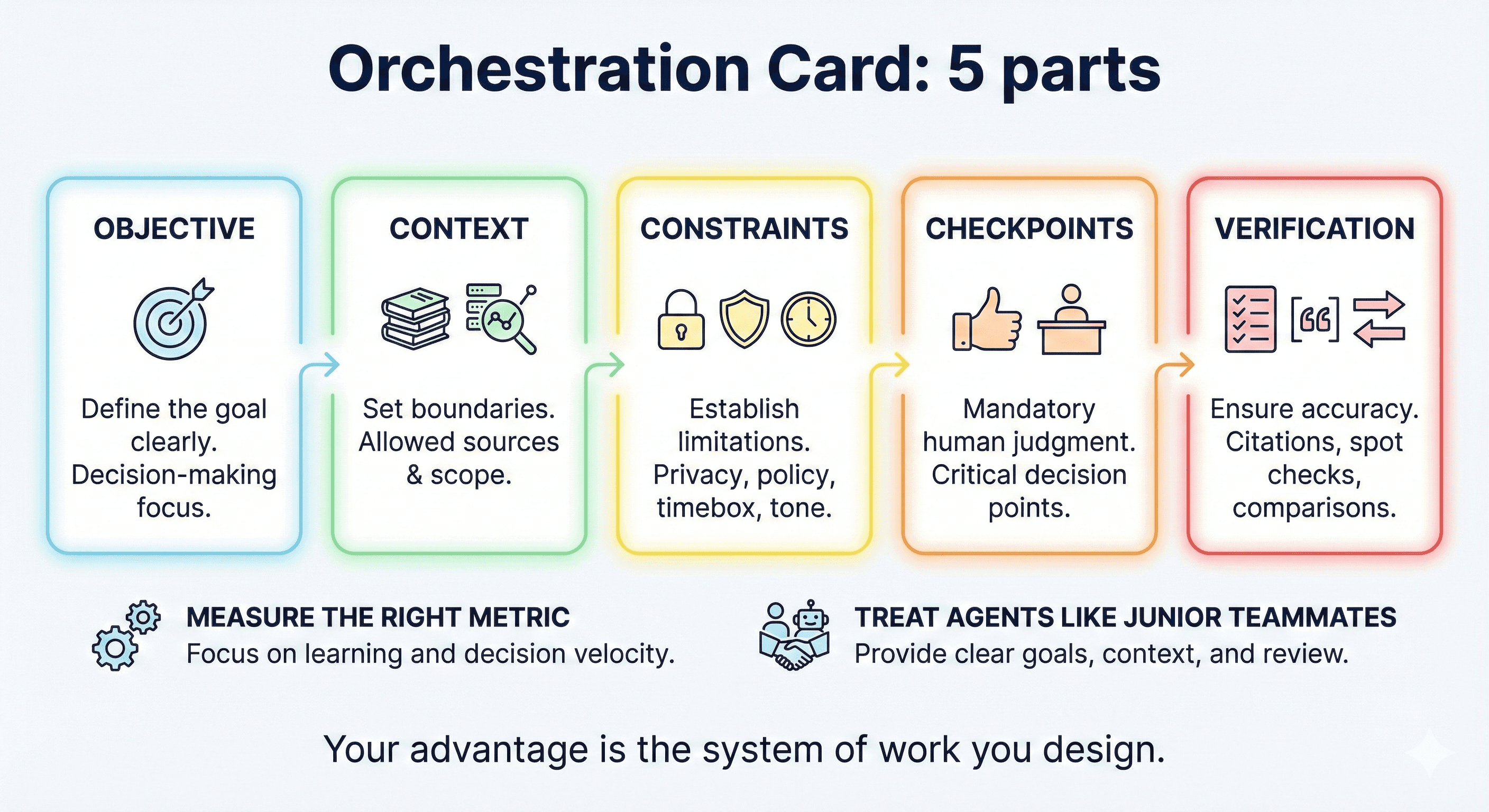

Your team has expanded — you now coordinate humans and agents. The work shifts from doing tasks to designing workflows: setting objectives, providing context, defining constraints, deciding where human judgment is non-negotiable, reviewing outputs, making trade-offs, and keeping the whole system aligned to outcomes.

This is a responsibility upgrade, not just a tool upgrade. Orchestration requires a kind of judgment we haven't had to exercise before: when to trust, when to verify, what stays human, and what can be safely delegated to a machine.

And here's the uncomfortable truth that most organizations aren't ready for: you can't orchestrate agents effectively without clear policies on data access, security, compliance, and ownership. The blockers to agentic adoption aren't primarily technical. They're organizational. And PMs who can navigate that ambiguity — bridging the gap between what's technically possible and what's organizationally feasible — will be exceptionally valuable.

A playbook to start with

If this resonates but feels abstract, here's something you can try this week.

Pick one workflow. Choose the one that eats the most time, or the one you dread. Research synthesis, competitive analysis, backlog grooming — whatever it is, pick one.

Write an orchestration card. Five components:

Objective — What decision does this workflow serve?

Context — What data sources are in scope? What's off-limits?

Constraints — Privacy rules, tone guidelines, timeboxes, definitions of done.

Checkpoints — Where must a human review before the process continues?

Verification — How do you validate the output? Citations, spot checks, cross-references.
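If you prefer to keep the card next to your project files rather than in a document, the five components above can be written down as a small data structure. The following Python sketch is one possible shape, not a standard format: the class name `OrchestrationCard`, the split of Context into `context` and `off_limits`, and the `missing` helper are assumptions made for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class OrchestrationCard:
    objective: str            # the decision this workflow serves
    context: list[str]        # data sources in scope
    off_limits: list[str]     # sources the agent must not touch (part of Context)
    constraints: list[str]    # privacy rules, tone, timeboxes, definition of done
    checkpoints: list[str]    # steps where a human must review before continuing
    verification: list[str]   # citations, spot checks, cross-references

    def missing(self) -> list[str]:
        """Return the components left empty; off_limits is allowed to be empty."""
        return [f.name for f in fields(self)
                if f.name != "off_limits" and not getattr(self, f.name)]


card = OrchestrationCard(
    objective="Decide which onboarding fixes enter next quarter's roadmap",
    context=["interview notes", "support tickets"],
    off_limits=["customer billing data"],
    constraints=["no personal data in outputs", "two-hour timebox"],
    checkpoints=[],
    verification=["every claim cites a source file"],
)
print(card.missing())  # ['checkpoints']
```

Here the card flags that no human checkpoint was defined, which is exactly the kind of omission worth catching before a workflow runs unattended.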

Measure what matters. Not speed. Not volume. Decision quality. Learning velocity. Time-to-insight. If your metrics only capture output, you'll optimize for the wrong thing.

Treat agents like junior teammates. Clear goals. Rich context. Mandatory reviews. Direct correction when they're wrong. Standards that evolve as you learn what works.

This isn't a one-time setup. It's an iterative design practice — and it is the new PM craft.

The question worth sitting with

In 2024, we learned to prompt. In 2025, we learned to adopt. In 2026, we learn to orchestrate.

The question isn't whether PMs will use AI. Every PM will. The question is whether you'll use it like a faster keyboard — producing the same work at higher speed — or whether you'll redesign how learning and decisions actually happen on your team.

The PMs who thrive won't be the ones with the best prompts or the earliest access to new models. They'll be the ones who think most clearly about the system around the tool — the objectives, the constraints, the checkpoints, the accountability.

The model is a commodity. The system you build around it is your edge.

That's the work now. That's the craft.