From Browsing Apartments to a Prototype

A tiny moment of friction while browsing real-estate listings turned into a weekend rabbit hole, a working prototype, and a set of product questions I can't stop thinking about.

The Moment

I Couldn't See What a Room Could Become. So I Built a Tool That Shows You.

Where do your best product ideas come from?

For me, inspiration rarely strikes at the desk. It usually shows up in the most ordinary moments. As a product manager, I've developed an occupational reflex where everyday life turns into an analysis session. At restaurants, I end up calculating unit economics while waiting for my coffee. In shops, I catch myself sizing up pricing strategy and throughput. Not the most relaxing hobby, but it's become instinct.

And yesterday, it happened again.

I was browsing real-estate listings on Otodom — Poland's dominant property marketplace, with 23.5 million monthly visits and a near-monopoly on the market — and I kept running into the same wall: "I can't really see what this place could become. Why is that still so hard?"

The listing photos showed bare walls, dated kitchens, empty rooms with bad lighting. Some had furniture from the 1990s. Others had no furniture at all. Every listing was asking me to do the same thing: imagine. And I'm not good at imagining empty rooms. Most people aren't.

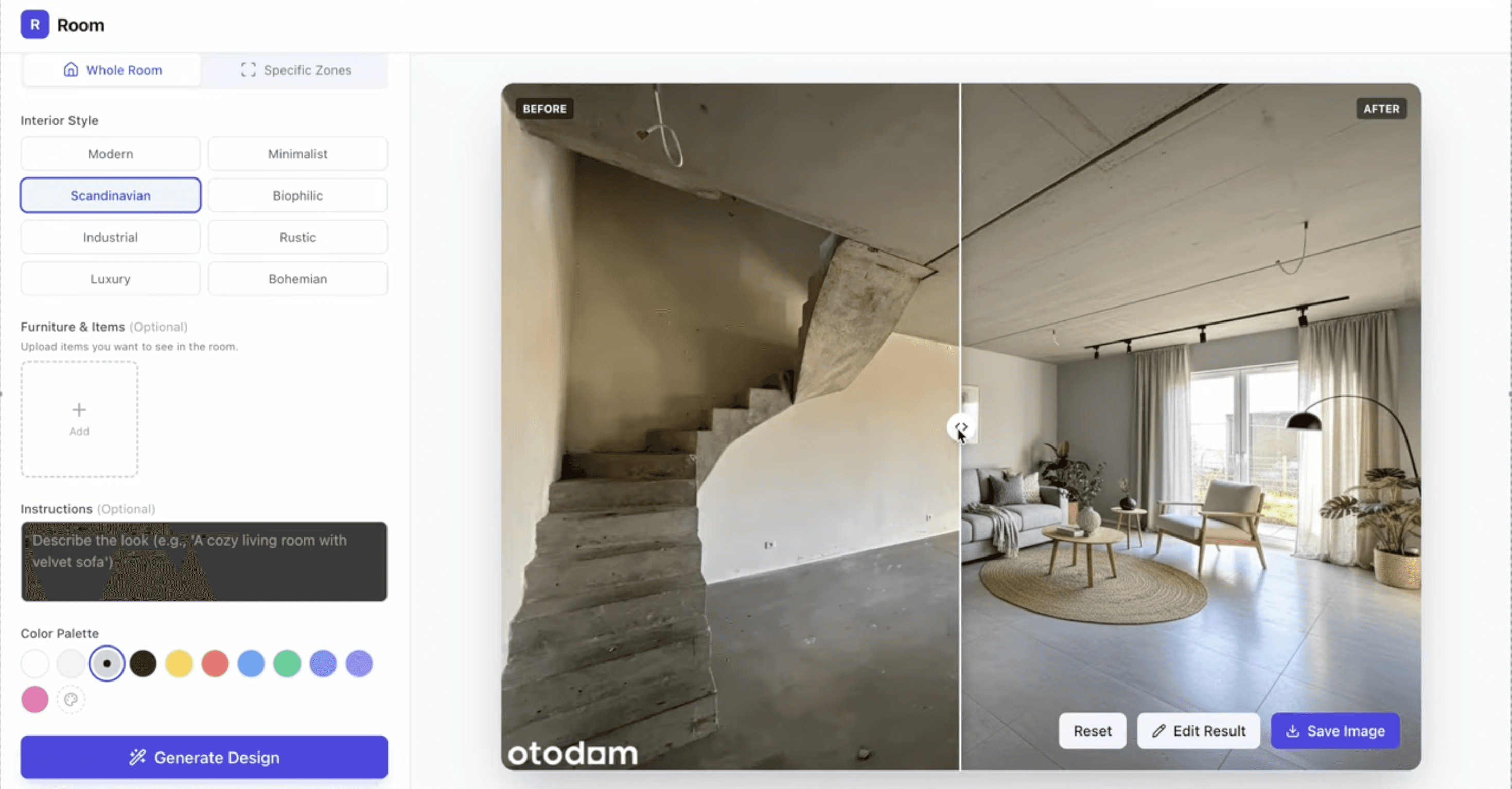

That tiny moment of friction was enough to trigger a weekend rabbit hole. I opened my laptop, and a few hours later I had a working prototype — an AI tool that lets you upload a photo of any room, pick a style, and instantly see a realistic concept of what it could look like after renovation.

From virtual staging to redecorating, decluttering, exterior redesigns — the use cases kept multiplying as I explored the problem. What traditionally required a designer, a budget, and days of turnaround started happening in seconds.

And that's when the product manager instinct kicked in properly. Not "this is cool technology" — but "is this a real problem, who else is solving it, what would it take to make it work at scale, and where does it break?"

Let me walk through what I found.

The Market Is Real — and Bigger Than I Expected

My first instinct was to check whether I'd stumbled into a crowded space or an empty one. The answer: crowded, growing fast, and still surprisingly underserved.

The virtual interior design AI market was valued at $1.52 billion in 2024 and is projected to reach $5.65 billion by 2029, according to a ResearchAndMarkets global report. That's roughly a 3.7x increase in five years (about 30% compound annual growth), driven by digitalization in architecture and design, growing consumer interest in home visualization, and the collapsing cost of AI image generation.
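
The multiple and the implied annual growth rate follow directly from the two report figures. A quick check in Python, using nothing beyond those numbers:

```python
def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Market size in billions of USD, as reported above (2024 actual, 2029 projection).
start_2024, end_2029 = 1.52, 5.65
years = 2029 - 2024

print(f"multiple: {growth_multiple(start_2024, end_2029):.2f}x")  # multiple: 3.72x
print(f"CAGR: {cagr(start_2024, end_2029, years):.1%}")           # CAGR: 30.0%
```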

The traditional staging comparison makes the opportunity tangible. Physical home staging costs $2,000 to $8,000+ for a three-month contract and requires 7 to 14 days of setup. AI virtual staging costs between $0.30 and $5 per image and delivers results in seconds. That's not a marginal improvement: even a 12-photo listing at the top AI price ($60) costs 97% less than the cheapest physical staging, and turnaround drops from weeks to moments.
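
The savings percentage depends on how many photos a listing needs, which a per-image price hides. A small sketch; the 12-photo listing size is an assumption for illustration, not a figure from the sources above:

```python
def cost_reduction(physical_cost: float, per_image_cost: float, images: int) -> float:
    """Fraction of the physical-staging cost saved by staging `images` photos virtually."""
    virtual_cost = per_image_cost * images
    return 1 - virtual_cost / physical_cost


# Assumed listing size: 12 photos.
# Least favorable case for AI: top AI price vs. cheapest physical staging.
print(f"{cost_reduction(2_000, 5.00, 12):.0%}")  # 97%
# Most favorable case: cheapest AI price vs. most expensive physical staging.
print(f"{cost_reduction(8_000, 0.30, 12):.2%}")
```

At $5 per photo against the $2,000 floor, savings only fall below 95% once a listing passes 20 photos.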

The data on impact is equally compelling. According to the National Association of Realtors, 81% of buyers' agents say staging makes it easier for buyers to visualize a property as their future home. Virtually staged listings reportedly sell 75% faster than those without visual enhancements, and staged homes sell for an average of 5-9% more than comparable unstaged properties.
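
To translate the 5-9% range into money, a sketch on a hypothetical listing price (600,000 in any currency; the price is an assumption, not a market figure):

```python
def premium_range(list_price: float, low_pct: float, high_pct: float) -> tuple[float, float]:
    """Extra sale value implied by a percentage premium range."""
    return list_price * low_pct / 100, list_price * high_pct / 100


# Hypothetical apartment price.
low, high = premium_range(600_000, 5, 9)
print(f"{low:,.0f} to {high:,.0f}")  # 30,000 to 54,000
```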

This isn't a nice-to-have. It's a measurable revenue driver for every party in the transaction.

The Competitive Landscape: Dozens of Tools, No Clear Winner (Yet)

Once I started mapping the existing players, the list got long fast. REimagineHome, Collov AI, RoomGPT, Virtual Staging AI (acquired by Zillow), Interior AI, Home Designs, Stager, HOQI, Remodeled AI, Ideal House… And that's just the dedicated platforms — not counting the designers using GPT-Image-1, Midjourney, Gemini Nano Banana or Flux directly.

HousingWire's 2026 virtual staging guide assessed the space and concluded that AI staging has reached a quality tipping point: the outputs are now photorealistic and stylish, not the surreal artifacts of two years ago.

But here's what struck me as a product person: despite the volume of tools, the market is fragmented. Most of these products are point solutions with narrow feature sets and undifferentiated positioning. Many use the same underlying models. The user experience across the category is inconsistent — some tools can't handle large images, others produce results that require multiple re-rolls, and very few handle the full workflow from room scan to styled output to compliance-ready export.

There's room. The question is what kind of room.

Zillow Already Made Its Move

If you're wondering whether major platforms are paying attention to this space, the answer arrived in October 2024. Zillow acquired Virtual Staging AI (VSAI), a company co-founded by Michael Bonacina and Mikhail Andreev out of Harvard Innovation Labs. The acquisition closed on October 7, 2024, and the Munich-based VSAI team joined Zillow's AI division.

In September 2025, Zillow launched the integrated product: AI-powered Virtual Staging built directly into Zillow Showcase, their premium listing experience. Buyers viewing listing photos can now tap a button, choose from styles like modern, Scandinavian, farmhouse, or luxury, and see rooms digitally restaged in real time. They can slide between views to compare the original and staged versions. The staging also works in reverse — removing existing furniture to show the room as a blank slate.

Josh Weisberg, Zillow's SVP of AI, positioned it as bringing the company's AI strategy to life for both consumers and agents. Amanda Pendleton, Zillow's home trends expert, framed the core insight precisely: "Too many buyers overlook what could be the perfect home for their family simply because they can't see past the furniture or design choices."

That's the exact friction I felt while browsing Otodom.

Zillow's integration matters because it signals the pattern: virtual staging is moving from standalone tool to embedded platform feature. It's becoming less a product category that exists independently and more a capability that gets absorbed into the surfaces where buyers and sellers already spend their time.

What About Otodom?

This is the question that interests me most as someone based in Poland. Otodom is owned by Prosus (through OLX Group), operates as Poland's number-one real-estate platform, and serves as the first stop for virtually every property search in the country. Their analytics platform tracks the entire Polish housing market. Their audience skews 25-34, which is exactly the demographic most comfortable with AI-enhanced experiences.

But as of now, Otodom doesn't offer native virtual staging or AI room visualization. The platform has invested in 3D virtual tours (including lending 3D cameras to brokers) and in market analytics. It hasn't yet made the Zillow-style move into AI-powered visual transformation.

Will they? The logic is compelling. Zillow is betting that embedding AI staging directly into listings increases buyer engagement and agent conversion. Otodom has the audience (23.5 million monthly visits), the listing data, and the parent company resources (Prosus) to build or acquire the capability. The question isn't whether the feature makes sense; it's whether Otodom moves before third-party tools capture the workflow.

If I were a PM at Otodom, I'd be watching Zillow's Showcase metrics closely. And I'd be prototyping this quarter.

From Observation to Prototype: What the Build Taught Me

Let me walk through what it actually took to go from "I can't see what this room could become" to a working tool. Not the technical details — the product decisions.

The core interaction is deceptively simple. Upload a photo. Pick a style. See the result. Three steps. But each step hides significant complexity. The upload needs to handle varying image quality, angles, and lighting conditions. The style selection needs to be intuitive but expressive enough to produce meaningfully different outputs. And the result needs to feel believable — not perfect, but credible enough to trigger the emotional response of "I could live here."

Style selection is a design challenge, not a technology challenge. The models can generate almost any aesthetic. The hard part is curating the options so they're useful rather than overwhelming. Most tools offer 8-20 presets (modern, Scandinavian, farmhouse, coastal, mid-century, industrial, luxury, etc.). But the styles that matter depend on the market. A Polish apartment buyer likely doesn't need "coastal grandmother." They might need "modern Polish" or "Warsaw loft." Localization of style categories is an underexplored differentiator.
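
One way to make style localization concrete is to layer market-specific presets on top of a global set. A minimal sketch; the preset names and prompt fragments are hypothetical placeholders, not tested prompts for any particular model:

```python
# Hypothetical preset catalog: global styles plus market-specific additions.
STYLE_PRESETS: dict[str, dict[str, str]] = {
    "global": {
        "modern": "clean lines, neutral palette, minimal decor",
        "scandinavian": "light oak, white walls, wool textiles",
        "industrial": "exposed brick, black steel, concrete floor",
    },
    "PL": {
        "modern polish": "light wood, white walls, compact multifunctional furniture",
        "warsaw loft": "exposed brick, high ceilings, steel-framed windows",
    },
}


def presets_for(market: str) -> dict[str, str]:
    """Global presets merged with the market's own (market entries win on name clashes)."""
    return {**STYLE_PRESETS["global"], **STYLE_PRESETS.get(market, {})}


def build_prompt(style: str, market: str) -> str:
    """Turn a user-facing style choice into a text instruction for the image model."""
    presets = presets_for(market)
    if style not in presets:
        raise ValueError(f"unknown style {style!r} for market {market!r}")
    return f"Restyle this room as {style}: {presets[style]}."


print(sorted(presets_for("PL")))
# ['industrial', 'modern', 'modern polish', 'scandinavian', 'warsaw loft']
```

The merge means a new market only has to define what's different, and each market's list stays short enough not to overwhelm.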

The "renovation preview" use case is different from "virtual staging." Staging adds furniture to empty rooms for marketing purposes. Renovation visualization changes the room's built-in and material elements (floors, wall finishes, cabinets, fixtures) to show what a space could become after investment. The technical challenge is harder (you're modifying the room itself, not just placing objects in it), the user intent is different (decision support, not marketing), and the stakes are higher (people spend real money based on renovation estimates). Most tools blur these two use cases. Separating them cleanly is a product opportunity.
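
Separating the two use cases can start with something as plain as an explicit mode that decides which parts of the photo may change. A sketch under assumed element categories (the category names are hypothetical; a real pipeline would get them from a segmentation step):

```python
from enum import Enum


class Mode(Enum):
    STAGING = "staging"        # marketing: add or remove movable objects
    RENOVATION = "renovation"  # decision support: also change finishes and fixtures


# Hypothetical element categories a pipeline might detect in a room photo.
MOVABLE = {"furniture", "decor", "rugs", "lamps"}
SURFACES = {"floor_finish", "wall_finish", "cabinets", "fixtures"}
STRUCTURE = {"walls", "windows", "doors", "ceiling_height"}  # editable in neither mode

EDITABLE = {
    Mode.STAGING: MOVABLE,
    Mode.RENOVATION: MOVABLE | SURFACES,
}


def disallowed(mode: Mode, requested: set[str]) -> set[str]:
    """Return the requested elements that this mode may not change."""
    return requested - EDITABLE[mode]


print(disallowed(Mode.STAGING, {"furniture", "cabinets"}))  # {'cabinets'}
print(disallowed(Mode.RENOVATION, {"cabinets", "windows"}))  # {'windows'}
```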

Speed matters more than perfection. In my prototype testing, I found that users don't need pixel-perfect accuracy. They need emotional plausibility, a result good enough that they recognize it: "Yes, this is the direction." The design process is iterative by nature. If the first generation takes 3 seconds and gets the user 70% of the way there, that's more valuable than a perfect render that takes 30 seconds. Speed enables iteration, and iteration enables decision-making.

The "before and after" is the product, not the "after" alone. The most powerful moment in every test wasn't seeing the styled room — it was the comparison. Sliding between the original photo and the transformation. That delta is what creates emotional engagement and drives sharing. Every AI staging tool should be built around the comparison experience, not just the generation.
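
The compositing behind a before/after slider is simple, which is part of the argument for making it the center of the experience. A sketch on plain nested lists so the logic stays visible; a real front end would do this on a canvas or GPU, and the function name is hypothetical:

```python
Pixel = tuple[int, int, int]
Image = list[list[Pixel]]  # rows of RGB pixels


def compare_slider(before: Image, after: Image, position: float) -> Image:
    """Composite for a before/after slider.

    Columns left of the divider come from `before`, the rest from `after`.
    `position` runs from 0.0 (all after) to 1.0 (all before).
    """
    if len(before) != len(after) or any(len(b) != len(a) for b, a in zip(before, after)):
        raise ValueError("before and after must have identical dimensions")
    if not 0.0 <= position <= 1.0:
        raise ValueError("position must be between 0 and 1")
    width = len(before[0]) if before else 0
    split = round(position * width)
    return [b_row[:split] + a_row[split:] for b_row, a_row in zip(before, after)]


before = [[(0, 0, 0)] * 4]
after = [[(255, 255, 255)] * 4]
print(compare_slider(before, after, 0.5))
# [[(0, 0, 0), (0, 0, 0), (255, 255, 255), (255, 255, 255)]]
```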

The Hard Problems Nobody's Talking About Enough

Building a weekend prototype is one thing. Building a sustainable product in this space is another. Here are the challenges that keep me thinking.

Consistency Across Rooms

A real apartment has multiple rooms. If you restyle each room independently, you get a living room that's Scandinavian, a kitchen that's industrial, and a bedroom that's mid-century. The AI doesn't know the rooms are connected. Maintaining a coherent design language across an entire property — consistent color palettes, material choices, furniture family — is a coordination problem that current per-image tools don't solve. Whoever cracks multi-room consistency will have a meaningful competitive edge.
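
A first step toward multi-room consistency is to pin one property-level design spec and derive every room's request from it, instead of styling rooms independently. A sketch with hypothetical fields; this only makes the instructions consistent, and the generated images could still drift, so a real system would need additional checks (for example, shared reference images or a palette comparison across outputs):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignSpec:
    """One design language for the whole property (fields are illustrative)."""
    style: str
    palette: tuple[str, ...]
    materials: tuple[str, ...]
    furniture_family: str


def room_prompt(spec: DesignSpec, room: str) -> str:
    """Build one room's instruction from the shared spec."""
    return (
        f"{room}, {spec.style} style; palette: {', '.join(spec.palette)}; "
        f"materials: {', '.join(spec.materials)}; furniture: {spec.furniture_family}"
    )


def property_prompts(spec: DesignSpec, rooms: list[str]) -> dict[str, str]:
    """Every room is conditioned on the same spec rather than styled on its own."""
    return {room: room_prompt(spec, room) for room in rooms}


spec = DesignSpec(
    style="scandinavian",
    palette=("white", "light oak", "sage green"),
    materials=("oak", "linen", "wool"),
    furniture_family="low-profile oak pieces",
)
for prompt in property_prompts(spec, ["living room", "kitchen", "bedroom"]).values():
    print(prompt)
```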

Structural Fidelity

Current image generation models are creative. Sometimes too creative. They'll add windows where there are none, remove load-bearing walls, change room dimensions, or alter ceiling heights. For staging purposes, this is a cosmetic annoyance. For renovation visualization, it's a trust-destroying error. If I'm using a tool to evaluate whether to buy an apartment that needs renovation, I need the walls, windows, and proportions to stay fixed. Only the surfaces, materials, and furnishings should change. This constraint requires specific model tuning or post-processing, and most tools don't enforce it rigorously enough.
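
One post-processing guard for structural fidelity: mark the regions the model may edit (furniture, surfaces) in a mask, treat everything else (walls, windows, openings) as protected, and reject any output where too many protected pixels changed. A sketch assuming the mask comes from a separate segmentation step not shown here; the pixel-difference test is deliberately crude (a global lighting change would also trip it), and both thresholds are arbitrary placeholders:

```python
Gray = list[list[int]]   # grayscale image: rows of 0-255 values
Mask = list[list[bool]]  # True where the model was allowed to change pixels


def structural_drift(original: Gray, generated: Gray, editable: Mask, tolerance: int = 12) -> float:
    """Fraction of protected (non-editable) pixels that changed by more than `tolerance`."""
    protected = changed = 0
    for o_row, g_row, m_row in zip(original, generated, editable):
        for o, g, can_edit in zip(o_row, g_row, m_row):
            if can_edit:
                continue
            protected += 1
            if abs(o - g) > tolerance:
                changed += 1
    return changed / protected if protected else 0.0


def passes_fidelity(original: Gray, generated: Gray, editable: Mask, max_drift: float = 0.02) -> bool:
    """Reject outputs that moved too much of the protected structure."""
    return structural_drift(original, generated, editable) <= max_drift


original = [[10, 10], [200, 200]]
generated = [[10, 90], [200, 200]]  # top-right pixel changed
print(passes_fidelity(original, generated, [[False, False], [False, True]]))  # False
print(passes_fidelity(original, generated, [[False, True], [False, True]]))   # True
```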

The Disclosure and Regulation Problem

California's Assembly Bill 723, signed into law and effective January 1, 2026, establishes first-in-the-nation disclosure requirements for digitally altered real estate images. Any listing photo that has been digitally altered, including with AI, must carry a clear disclosure statement, and the agent must provide access to the original, unaltered image. Violation is classified as a misdemeanor.

This is significant for the entire category. AB 723 distinguishes between standard photographic edits (brightness, color correction, exposure) — which are exempt — and digital alterations that add, remove, or change physical elements like furniture, fixtures, landscaping, or views — which require disclosure.

The regulatory direction is clear: AI-generated images in real estate will require transparency. Products that build compliance into the workflow — automatic watermarking, side-by-side original/altered exports, disclosure-ready metadata — will have an advantage over tools that treat regulation as someone else's problem. European markets will likely follow with their own frameworks, and any product targeting Polish or EU markets should be watching closely.
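
Building compliance into the workflow can start at the export step: every altered image carries a disclosure when needed and a pointer to its original. A sketch that mirrors the exempt-versus-alteration distinction described above in simplified form; it is not an encoding of AB 723's actual requirements, and the edit labels, file names and disclosure wording are hypothetical:

```python
from dataclasses import dataclass
from typing import Optional

# Simplified reading of the exempt/altered distinction described above;
# not the statute's actual text.
EXEMPT_EDITS = {"brightness", "color_correction", "exposure"}
DISCLOSURE_TEXT = "This image has been digitally altered."  # hypothetical wording


@dataclass
class ExportRecord:
    altered_path: str
    original_path: str  # kept alongside so the unaltered image stays accessible
    edits: list[str]
    disclosure: Optional[str] = None


def needs_disclosure(edits: list[str]) -> bool:
    """Fail closed: any edit not known to be exempt is treated as an alteration."""
    return any(edit not in EXEMPT_EDITS for edit in edits)


def build_export(altered_path: str, original_path: str, edits: list[str]) -> ExportRecord:
    """Bundle the altered image with its original and, if required, a disclosure."""
    record = ExportRecord(altered_path, original_path, list(edits))
    if needs_disclosure(edits):
        record.disclosure = DISCLOSURE_TEXT
    return record


print(build_export("kitchen_staged.jpg", "kitchen.jpg", ["exposure", "add_furniture"]))
```

The fail-closed default means an edit type added later cannot silently skip disclosure.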

Quality Control at Scale

AI image generation is probabilistic, not deterministic. The same input can produce wildly different outputs across runs. For a consumer tool where the user is experimenting, this variability is acceptable — even fun. For a B2B tool serving real estate agents who need reliable, professional results on every listing, consistency is non-negotiable.

This means building quality filtering (rejecting outputs that fall below a threshold), offering easy re-generation, and potentially allowing manual touch-up or guided editing on top of AI-generated results. The best products in this space will blend AI speed with human-in-the-loop polish.
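
The filter-and-retry loop can be sketched in a few lines. The generator and scorer are stand-ins (how to score a staged room automatically is itself an open question), and the threshold and attempt budget are placeholders:

```python
from typing import Callable, Optional


def generate_with_qc(
    generate: Callable[[int], str],  # hypothetical: seed -> image handle
    score: Callable[[str], float],   # hypothetical: image -> quality score in [0, 1]
    threshold: float = 0.8,
    max_attempts: int = 4,
) -> tuple[Optional[str], list[float]]:
    """Re-roll with new seeds until a candidate clears `threshold`.

    Returns (image, scores); image is None when every attempt fell short,
    which is the signal to route the job to human review.
    """
    scores: list[float] = []
    for seed in range(max_attempts):
        candidate = generate(seed)
        s = score(candidate)
        scores.append(s)
        if s >= threshold:
            return candidate, scores
    return None, scores


# Stand-ins for a real model and a real quality scorer.
fake_scores = {"img-0": 0.55, "img-1": 0.72, "img-2": 0.91}


def fake_generate(seed: int) -> str:
    return f"img-{seed}"


def fake_score(image: str) -> float:
    return fake_scores.get(image, 0.0)


print(generate_with_qc(fake_generate, fake_score))                  # ('img-2', [0.55, 0.72, 0.91])
print(generate_with_qc(fake_generate, fake_score, threshold=0.95))  # (None, [0.55, 0.72, 0.91, 0.0])
```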

The Furniture Commerce Opportunity (and Its Complexity)

There's an obvious monetization play: if you can show someone their room with a new sofa, link them to where they can buy that exact sofa. Several tools are exploring this — aiStager extracts real product dimensions and renders actual furniture to scale. The logic is a visual search funnel: see the room, love the sofa, buy the sofa.

But the integration is harder than it sounds. You need real-time product inventory data, accurate dimensional rendering, affiliate or commerce relationships, and a user flow that doesn't break the visualization experience. Wayfair's Decorify launched in 2023 to attempt this exact play: reimagining spaces with purchasable items. It's an elegant idea that requires robust execution.
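
One small piece of "accurate dimensional rendering" is refusing to link a product that cannot physically fit the spot it was rendered into. A sketch assuming the space's dimensions have already been estimated from the photo (itself a hard problem) and that the catalog exposes product dimensions; the SKUs, sizes and clearance margin are hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    sku: str
    width_cm: float
    depth_cm: float


def fits(product: Product, space_width_cm: float, space_depth_cm: float,
         clearance_cm: float = 10) -> bool:
    """True if the product, plus a clearance margin in each dimension, fits the space."""
    return (product.width_cm + clearance_cm <= space_width_cm
            and product.depth_cm + clearance_cm <= space_depth_cm)


def shoppable(catalog: list[Product], space_width_cm: float, space_depth_cm: float) -> list[str]:
    """SKUs that can be offered for this spot without misleading the buyer about fit."""
    return [p.sku for p in catalog if fits(p, space_width_cm, space_depth_cm)]


catalog = [Product("SOFA-A", 220, 95), Product("SOFA-B", 180, 90)]
print(shoppable(catalog, space_width_cm=200, space_depth_cm=120))  # ['SOFA-B']
```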

Why This Problem Keeps Pulling Me In

This started as a moment of friction on a property listing. It turned into a weekend build. And now it's turned into a deeper exploration of a market I didn't expect to be this interesting.

What fascinates me isn't the technology — AI image generation is impressive but increasingly commoditized. What fascinates me is the product problem.

Because visualization is one of those rare friction points that sits at the intersection of emotion and economics. When a buyer can't see what a home could become, they pass. When they can see it, they bid higher. The delta between "can't imagine" and "can see it" is measurable in thousands of dollars per transaction, millions across a marketplace.

And the product surface is still being defined. Is this a standalone tool? A platform feature embedded in Zillow or Otodom? A browser extension that overlays on any listing site? A WhatsApp bot that agents share with clients? A Canva-style editor for real estate? The form factor is as open as the technology.

What Today's AI Stack Makes Possible for PMs

Let me step back from the specific product and address the broader pattern, because that's what this experience really drove home.

I went from curiosity to insight to working prototype in a few hours. No team. No budget approvals. No design sprints. Just an observation, a question, and the current AI tool stack.

This is what's changing for product managers right now. The distance between "I noticed something" and "I can test whether it matters" has collapsed. Not for every idea — complex backend systems, regulatory-heavy products, and high-stakes applications still require full teams and proper validation. But for the broad category of "I think I see a user pain point and want to know if a solution is possible" — the time to prototype has dropped from weeks to hours.

That acceleration doesn't replace product judgment. If anything, it amplifies the need for it. When you can prototype anything quickly, the skill isn't building — it's deciding what's worth building. It's evaluating whether the friction you noticed is a personal quirk or a market need. It's checking whether someone has already solved it, how they're doing, and whether the market dynamics support a new entrant.

The prototype is the starting point. The product thinking is the work.

So... Is This Problem Worth Solving at Scale?

Here's my honest assessment after going deep.

The market says yes. $1.52 billion in 2024, projected to $5.65 billion by 2029. Zillow making acquisitions. Major real estate platforms integrating native features. Dozens of funded startups. Real, measurable impact on property sales velocity and price.

The technology says yes. AI image generation quality has crossed the "good enough to be useful" threshold. Costs are dropping. Speed is improving. Multi-modal models can now reason about spatial layouts, materials, and design coherence in ways that weren't possible two years ago.

The competitive landscape says "yes, but pick your lane." The standalone virtual staging market is getting crowded and will likely consolidate. The renovation visualization space is less mature and more technically challenging. The embedded-in-platform play (becoming the native feature for Otodom, Rightmove, or other regional portals) is the highest-leverage opportunity but requires partnership or acquisition.

The regulatory direction says "build compliance in from day one." California's AB 723 is the first signal, and Europe is likely to follow. Products that treat disclosure and transparency as core features, not afterthoughts, will have a structural advantage.

And if Otodom or other CEE real estate platforms aren't already building this... they're leaving the opportunity for someone else to capture the workflow. The buyer intent is there. The technology is there. The question is who moves first.

Where Do Product Ideas Come From?

Back to the question I started with. For me, the honest answer: everywhere except at the desk.

Browsing apartments. Standing in a queue. Waiting for coffee. Walking through a store. Each time, the same instinct kicks in — not "this is annoying" but "why is this still hard, and what would need to be true for it to be easy?"

The best product ideas I've had didn't come from strategy documents or brainstorming sessions. They came from paying attention to the friction in ordinary life and then being willing to chase the thread.

This one started with a listing photo. It led to a prototype, a market analysis, and more questions than I started with. That's how it usually goes.

Where do you find your product inspiration? During work? On walks? In the shower? While browsing apartments at midnight?

And on the specific question: do you think platforms like Otodom are already building native versions of this for release in the next few months? Because if Zillow is any signal... the clock is ticking.
