GenAI in Enterprise Legal Departments

Sample report: Generative AI in Enterprise Legal Departments: Strategic Integration, Value Realization, and the Paradigm Shift in Legal Operations

Important: this is a sample report for demonstration purposes.

Executive Summary: Investment Proposal

The integration of generative artificial intelligence (GenAI) into enterprise legal departments has crossed a critical threshold. Moving rapidly from a period of speculative experimentation into an era of rigorous, value-driven deployment, corporate counsel and legal operations professionals are fundamentally restructuring their operational architectures. As the technological and regulatory landscapes mature through 2025 and 2026, the mandate from corporate boards is no longer merely to pilot generative AI, but to harness these advanced large language models (LLMs) at scale to drive measurable return on investment (ROI). This transformation is characterized by the implementation of bespoke technological infrastructures, the aggressive repatriation of legal work previously outsourced to external law firms, and the navigation of complex, unprecedented risks concerning data privacy, intellectual property, and attorney-client privilege.

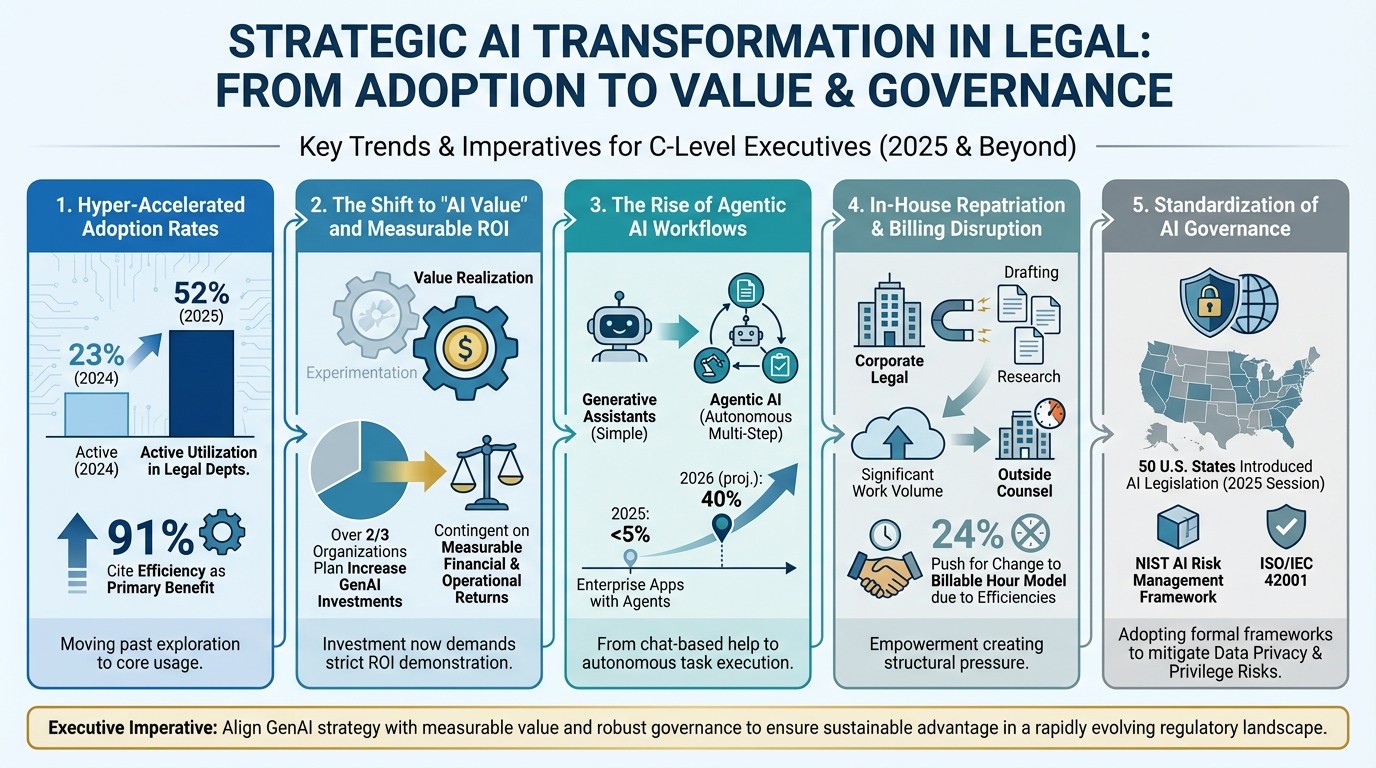

The velocity of this enterprise adoption is unprecedented within the traditionally conservative legal sector. According to comprehensive industry data, the active utilization of GenAI within corporate law departments more than doubled over a single year, rising from 23 percent in 2024 to 52 percent in 2025. Overwhelmingly, efficiency and productivity gains are cited as the primary catalysts for this integration, fundamentally altering how routine and complex legal tasks are executed. However, the transition from isolated pilot programs to enterprise-wide adoption requires navigating a labyrinth of strategic considerations. Organizations must differentiate between consumer-grade applications and professional-grade, agentic AI ecosystems; reconcile the technology with stringent, emerging regulatory frameworks like the NIST AI Risk Management Framework and ISO/IEC 42001; and confront the existential threat these efficiencies pose to traditional outside counsel billing models.

This investment proposal outlines the strategic roadmap for deploying generative AI within our legal operations. It provides a granular analysis of core operational use cases, the underlying technical architectures driving these platforms, methodologies for quantifying ROI, and the critical legal and governance frameworks required to mitigate enterprise risk. Ultimately, this document serves as the business case to secure funding for a formal Project Discovery Phase.

Major Trend Overview

The enterprise legal sector is currently navigating several converging macro-trends regarding artificial intelligence. Based on recent market data and industry analysis, the following trends define the current landscape:

Hyper-Accelerated Adoption Rates: The legal sector has moved past tentative exploration. Active utilization of generative AI within corporate law departments surged from 23 percent in 2024 to 52 percent in 2025, with 91 percent of legal professionals citing efficiency as the primary benefit.

The Shift to "AI Value" and Measurable ROI: Organizations are transitioning from a period of "AI experimentation" into an era demanding strict value realization. Over two-thirds of organizations plan to increase their GenAI investments in 2025, but continued executive support is now entirely contingent on demonstrating measurable financial and operational returns.

The Rise of Agentic AI Workflows: The technological frontier is rapidly shifting from purely generative assistants to "agentic" AI—systems capable of executing multi-step, complex workflows autonomously. Industry analysts project that 40 percent of enterprise applications will feature task-specific AI agents by 2026, up from less than 5 percent in 2025.

In-House Repatriation and Billing Disruption: Generative AI is empowering corporate legal teams to bring significant volumes of work—such as document drafting and legal research—back in-house. This is creating structural pressure on traditional outside counsel relationships, with 24 percent of legal professionals indicating they will push for a change to the billable hour model due to these new efficiencies.

Standardization of AI Governance: As the technology scales, so does regulatory scrutiny. In the 2025 legislative session alone, all 50 U.S. states introduced AI-related legislation. Consequently, legal departments are rapidly adopting formal, internationally recognized governance frameworks like the NIST AI Risk Management Framework and ISO/IEC 42001 to mitigate existential risks regarding data privacy and attorney-client privilege.

Market Urgency and the Strategic Mandate for AI Value

The landscape of legal technology in 2025 and 2026 is defined by a decisive shift from passive planning to active implementation. Industry surveys from the Association of Corporate Counsel (ACC), the Thomson Reuters Institute, and Deloitte illustrate a legal sector that is aggressively mobilizing to capture the operational efficiencies promised by generative AI.

The Statistical Acceleration of Enterprise GenAI

Data collected across global jurisdictions highlights the rapid normalization of AI within corporate law practices. Among in-house counsel, 52 percent report actively using generative AI in their daily practice in 2025, a dramatic surge from the 23 percent reported just a year prior. Simultaneously, the percentage of corporate legal professionals who are merely "passively planning" or educating themselves on the technology more than halved, from 30 percent in 2024 to 14 percent in 2025, while the share with no plans to use it fell from 10 percent to 2 percent.

| AI Readiness Stage in Corporate Legal Departments | 2024 Adoption Rate | 2025 Adoption Rate | Year-Over-Year Trend (percentage points) |
| --- | --- | --- | --- |
| Already using in legal practice | 23% | 52% | +29 pts (more than doubled) |
| Using beta products/testing | 15% | 15% | Flat |
| Researching/evaluating vendors | 22% | 17% | -5 pts (maturing pipeline) |
| Passively planning/educating | 30% | 14% | -16 pts (shift to action) |
| Not planning to use | 10% | 2% | -8 pts (near-total elimination) |
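The year-over-year column in the table above is an absolute difference between two shares, which is a change in percentage points rather than a relative change. The following minimal sketch computes both measures from the table's figures; the function names are illustrative and not part of any survey methodology.

```python
# Adoption shares (percent of respondents) copied from the table above: (2024, 2025).
adoption = {
    "Already using in legal practice": (23, 52),
    "Using beta products/testing": (15, 15),
    "Researching/evaluating vendors": (22, 17),
    "Passively planning/educating": (30, 14),
    "Not planning to use": (10, 2),
}

def point_change(before: int, after: int) -> int:
    """Absolute change between two shares, in percentage points."""
    return after - before

def relative_change(before: int, after: int) -> float:
    """Change relative to the starting share, as a fraction."""
    return (after - before) / before

for stage, (y2024, y2025) in adoption.items():
    print(f"{stage}: {point_change(y2024, y2025):+d} pts, "
          f"{relative_change(y2024, y2025):+.0%} relative")
```

For the first row, a 29-point rise from a base of 23 percent corresponds to a relative increase of roughly 126 percent, which is why the share can be said to have more than doubled.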

The broader professional services sector mirrors this trend. Thomson Reuters notes that general GenAI adoption jumped from 14 percent to 26 percent across the industry, with law firms leading at 28 percent adoption. Notably, 59 percent of law firms and 57 percent of corporate legal departments now believe GenAI should be deeply integrated into their core workflows. This optimism is primarily driven by the technology's capacity to automate routine tasks, unlock new opportunities for strategic innovation, and deliver unprecedented time savings.

The Shift from Experimentation to Measurable Value

Deloitte's 2025 predictions for in-house legal departments underscore a critical maturation in corporate strategy. While 2024 was defined by AI experimentation, 2025 marks the era of "AI value". Over two-thirds of organizations plan to increase their GenAI investments, providing legal teams with substantial executive support. However, this capital influx is contingent upon the demonstration of measurable returns.

Deloitte identifies several key shifts occurring within the market. First, achieving adoption at scale is recognized as the single biggest barrier to realizing value, requiring significant investment in foundational training and change management to drive sustained user engagement. Second, delivering real AI benefits is becoming a key differentiator for legal service providers. While law firms generated significant market noise regarding their AI capabilities in 2024, in-house teams are now demanding that these benefits flow directly to the corporate client in the form of cost reductions and enhanced service delivery. Finally, the role and skillset of the legal department are fundamentally changing. Legal operations professionals are increasingly working alongside attorneys to deliver AI-augmented services, evolving the department's role to support the safe adoption of AI across the broader enterprise.

Value Creation and Operational Transformation

The application of generative AI in corporate legal environments transcends basic text generation. Market evaluations, notably those conducted by Gartner, have identified the specific use cases that offer the highest business value and operational feasibility. In each of them, the technology functions as an analytical engine that digests vast corpora of unstructured data to surface actionable intelligence.

Contract Lifecycle Management (CLM) and Data Extraction

Historically, post-signature contract management and mergers and acquisitions (M&A) due diligence have been labor-intensive processes, requiring extensive human capital to manually extract and classify terms from legacy agreements. Generative AI revolutionizes this process through automated contract visibility and intelligent data extraction. By deploying custom machine learning models trained on vast legal datasets, legal departments can instantly identify, extract, and classify highly specific terms and conditions from legacy and third-party contracts, transforming static repositories into dynamic, searchable databases.

The analytical capability of GenAI extends deeply into automated contract review. The technology accelerates the negotiation cycle by identifying clauses that deviate from established organizational standards. Modern professional-grade systems execute automated redlining and provide playbook-based reviews, significantly reducing the cycle times and human review requirements that have historically bottlenecked enterprise revenue generation.
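To make the idea of a playbook-based review concrete, the sketch below flags clauses that are missing or that differ materially from an approved standard. It is an illustration under stated assumptions: the `PLAYBOOK` entries, the clause labels, and the 0.9 threshold are hypothetical, and plain string similarity from Python's standard `difflib` module stands in for the trained models that commercial tools use.

```python
from difflib import SequenceMatcher

# Hypothetical playbook: clause type -> approved standard wording.
PLAYBOOK = {
    "governing_law": "This Agreement is governed by the laws of the State of New York.",
    "liability_cap": "Liability is limited to the fees paid in the preceding twelve months.",
}

def similarity(a: str, b: str) -> float:
    """Character-level similarity between 0.0 and 1.0, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def review(contract: dict[str, str], threshold: float = 0.9) -> list[str]:
    """Return clause types that are missing or deviate from the playbook."""
    flagged = []
    for clause_type, standard in PLAYBOOK.items():
        text = contract.get(clause_type)
        if text is None or similarity(text, standard) < threshold:
            flagged.append(clause_type)
    return flagged

incoming = {
    "governing_law": "This Agreement is governed by the laws of the State of New York.",
    "liability_cap": "Liability is unlimited.",
}
print(review(incoming))
```

A flagged clause would then be routed to an attorney or offered an automated redline; the sketch deliberately stops at detection.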

Furthermore, advanced AI systems facilitate sophisticated contract risk analysis. These tools map and score risks within agreements, offering proactive indicators of liability gaps or deviations from standard thresholds before a contract is executed. This capability ensures consistency across global enterprise negotiations and allows legal professionals to uncover potentially overlooked issues, prioritizing high-urgency tasks and mitigating risk.
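The mapping and scoring step described above can be sketched as a simple likelihood-times-impact model compared against an organizational threshold. The 1-to-5 scales, the threshold of 12, and the example findings are assumptions chosen for illustration; they are not drawn from any vendor's scoring method.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    clause: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (minor) to 5 (severe), assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

ESCALATION_THRESHOLD = 12  # hypothetical organizational threshold

def escalate(findings: list[Finding]) -> list[Finding]:
    """Return findings at or above the threshold, highest score first."""
    hits = [f for f in findings if f.score >= ESCALATION_THRESHOLD]
    return sorted(hits, key=lambda f: f.score, reverse=True)

findings = [
    Finding("Auto-renewal without notice", likelihood=3, impact=2),
    Finding("Missing data processing terms", likelihood=3, impact=4),
    Finding("Uncapped indemnity", likelihood=4, impact=5),
]
for f in escalate(findings):
    print(f"{f.score:>2}  {f.clause}")
```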

| Gartner's Top GenAI Use Cases | Core Mechanism & Functionality | Enterprise ROI & Business Value Metrics |
| --- | --- | --- |
| Contract Visibility & Data Extraction | Identifies, extracts, and classifies terms from legacy/third-party contracts using pattern recognition. | Enhances M&A due diligence speed; drastically reduces manual administrative burden in regulated industries. |
| Automated Contract Review | Identifies deviations from standard corporate playbooks and applies automated redlines. | Accelerates sales cycles; reduces dependency on outside counsel; directly impacts business revenue velocity. |
| Contract Risk Analysis | Maps and scores contractual risks based on predetermined organizational thresholds. | Ensures consistency in global negotiations; proactively flags liability gaps and compliance failures. |
| Legal Intake & Triage | Analyzes natural language in written requests to categorize by risk, region, and urgency. | Optimizes resource routing; automates preliminary responses, freeing staff for complex strategic analysis. |
| Document Summarization | Condenses complex legal texts, discovery files, and lease agreements into concise overviews. | Accelerates information consumption; aids in rapid, informed decision-making during litigation or review. |
| Meeting Transcription & Summarization | Converts spoken discussions into written transcripts highlighting key legal takeaways. | Elevates productivity by eliminating manual note-taking; ensures accurate historical records of negotiations. |
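The intake and triage row above describes categorizing written requests by topic and urgency. A minimal sketch of that routing logic follows. The keyword rules and queue names are hypothetical, and regular expressions stand in for the language model a production system would use to read the request.

```python
import re

# Hypothetical keyword rules; a real deployment would classify with a language model.
URGENT = re.compile(r"\b(subpoena|injunction|deadline|breach|regulator)\b", re.I)
ROUTES = {
    "contracts": re.compile(r"\b(nda|contract|agreement|msa)\b", re.I),
    "employment": re.compile(r"\b(employee|termination|harassment)\b", re.I),
    "privacy": re.compile(r"\b(gdpr|ccpa|personal data)\b", re.I),
}

def triage(request: str) -> dict:
    """Assign a request to the first matching queue and mark whether it is urgent."""
    queue = next((name for name, rx in ROUTES.items() if rx.search(request)), "general")
    return {"queue": queue, "urgent": bool(URGENT.search(request))}

print(triage("Please review the NDA with our new supplier."))
print(triage("We received a subpoena about a former employee."))
```

Requests that match no rule fall through to a general queue, so nothing is silently dropped.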

Advanced Legal Research and Drafting Augmentation

Traditional legal research, which routinely consumes hours of attorney time, is being streamlined through AI's ability to review and synthesize vast quantities of statutory data and case law. While early iterations of GenAI struggled with "hallucinations"—inventing fictitious citations—modern, legally trained models utilize sophisticated retrieval mechanisms to provide highly accurate, cited research outputs that deeply inform strategic decision-making.

In the realm of document creation, GenAI serves as an advanced, high-speed drafting assistant. Whether crafting initial drafts of internal memos, business correspondence, or complex transactional documents, AI-powered tools generate thorough baseline documents far faster than manual drafting. For example, when briefing a business team on the options for terminating a supply contract, GenAI can quickly produce a checklist of procedural steps and jurisdictional deadlines, along with a draft notification letter ready for attorney review. The same automation matters in litigation: eDiscovery remains the largest cost in complex matters, making it a prime target for AI-driven operational savings.

The Future of Work: Agentic AI Workflows

The technological frontier within legal departments is rapidly shifting from purely generative AI to agentic AI frameworks. While generative AI excels at isolated, single-step tasks like drafting a summary or generating an image, agentic AI acts autonomously to execute multi-step, complex workflows.

This collaboration between generative and agentic technologies allows legal professionals to move beyond isolated tasks and toward integrated, end-to-end operational processes. Industry analysts forecast a rapid acceleration in this area; Gartner predicts that 40 percent of enterprise applications will feature task-specific AI agents by 2026, up from less than 5 percent in 2025. For instance, in case law research, GenAI summarizes the relevant cases, while agentic AI autonomously organizes the findings, cross-references and compares them across different jurisdictions, and prepares a formatted report for attorney review. In contract analysis, GenAI highlights the key clauses and risks, while the agentic AI acts to compare versions, track the changes over time, update external compliance checklists, and schedule automated follow-ups via CRM integrations. This evolution toward operational intelligence promises to fundamentally redesign the end-to-end legal delivery model.
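The case law research example above can be pictured as a chain of steps that share state and end at a human checkpoint. The sketch below is a simplified illustration, not a description of any product: the step functions are stubs, the case names are placeholders, and a real agent would call a language model and external systems inside each step.

```python
from typing import Callable

State = dict
Step = Callable[[State], State]

def summarize(state: State) -> State:
    # Stub for the generative step; a real system would call an LLM here.
    state["summaries"] = {case: f"Summary of {case}" for case in state["cases"]}
    return state

def group_by_jurisdiction(state: State) -> State:
    grouped: dict[str, list[str]] = {}
    for case, jurisdiction in state["cases"].items():
        grouped.setdefault(jurisdiction, []).append(case)
    state["by_jurisdiction"] = grouped
    return state

def draft_report(state: State) -> State:
    lines = [f"{j}: {', '.join(sorted(cases))}"
             for j, cases in sorted(state["by_jurisdiction"].items())]
    state["report"] = "\n".join(lines)
    state["status"] = "awaiting_review"  # hand off to a human reviewer
    return state

def run_agent(state: State, steps: list[Step]) -> State:
    """Run each step in order and keep an audit log of what was executed."""
    for step in steps:
        state = step(state)
        state.setdefault("log", []).append(step.__name__)
    return state

# Placeholder case names mapped to jurisdictions.
result = run_agent(
    {"cases": {"Smith v. Jones": "NY", "Doe v. Roe": "CA", "A v. B": "NY"}},
    [summarize, group_by_jurisdiction, draft_report],
)
print(result["report"])
```

Keeping an explicit log and a final review status reflects the governance concerns raised later in this report: each autonomous step remains traceable and nothing is released without attorney sign-off.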

Technical Architecture: Security and Integration

The success of generative AI in a corporate legal context is entirely dependent on the underlying technological architecture. General-purpose models trained on public internet data lack the nuanced understanding required for complex legal analysis and carry unacceptable risks regarding data privacy and intellectual property. Consequently, the enterprise legal market has seen the proliferation of domain-specific AI platforms, driving a massive surge in legal tech investment.

Leading Enterprise Legal AI Platforms

The market is currently dominated by a tier of highly specialized AI tools designed explicitly to handle the rigors of corporate legal work. Platforms such as Harvey, Luminance, Lexis+ AI, Litera Kira, and Spellbook have emerged as essential, non-negotiable components of the modern legal technology stack.

| Enterprise Legal AI Platform | Primary Operational Focus | Key Features and Unique Advantages | Enterprise Security Posture |
| --- | --- | --- | --- |
| Harvey | Governance, compliance, complex contract review. | Custom-trained on legal data; tailored for massive corporate departments; generative legal analysis. | SOC 2, ISO 27001, GDPR, CCPA |
| Luminance | Due diligence, bulk document review. | AI-powered pattern recognition; rapid anomaly detection across large data sets. | ISO/IEC 27001, SOC 2, DORA |
| Litera (Kira) | Document drafting, contract comparison. | Machine learning for firm-specific use cases; version comparison; document quality control. | SOC 2, SOC 3, AES-256 encryption |
| Lexis+ AI | Legal research, case law analysis. | Legal-specific AI grounded in an extensive, proprietary database of case law and statutes. | ISO/IEC 27001:2022, NIS2, SOC 2 |
| Spellbook | Contract drafting and automated review. | Automated clause suggestions; advanced redlining; playbook-based review integration. | SOC 2, TLS 1.2, AES-256 |
| Ironclad | Contract Lifecycle Management (CLM). | High-volume contract management; approval workflows; comprehensive tracking dashboards. | SOC 2, GDPR, CCPA |

Document Management System (DMS) Integration Strategies

A critical insight regarding enterprise AI adoption is that standalone, siloed tools often fail to achieve widespread utilization due to "context switching" and workflow friction. To maximize efficacy, AI capabilities must be embedded directly into the environments where legal professionals inherently execute their daily work—primarily the Document Management System (DMS).

The integration battle is fiercely contested between industry leaders like iManage and NetDocuments. iManage has integrated AI natively into its platform, emphasizing its highly performant cloud architecture, offline caching, and a reported 99.98 percent uptime. iManage's deep integration with the Microsoft 365 Office suite allows lawyers to seamlessly leverage AI within Outlook or Word, executing complex tasks without breaking their established workflows. According to industry surveys, a staggering 91 percent of firms piloting generative AI are utilizing solutions tied to iManage, viewing the platform's robust governance, support for massive workspaces (up to 10,000 folders), and security protocols as a vital foundation for "AI Confidence".

Conversely, NetDocuments offers the ndMAX AI suite, which embeds generative AI capabilities within its cloud DMS. The ndMAX AI Legal Assistant allows users to interact with stored knowledge via natural language queries while strictly adhering to existing security permissions and governance controls. However, market feedback indicates challenges with NetDocuments regarding scale and performance degradation, noting technical limitations such as a 500-folder cap and a web-based integration model that sometimes forces awkward context-switching and "tab fatigue" for legal practitioners. Ultimately, the future of the DMS hinges on a revolutionary approach led by GenAI, transforming static repositories into dynamic engines of automated drafting and real-time knowledge retrieval.

Retrieval-Augmented Generation (RAG)

The technological backbone enabling secure, accurate enterprise AI is the Retrieval-Augmented Generation (RAG) architecture. Large Language Models (LLMs), such as GPT-4 and Llama 2, have transformed natural language processing, but their reliance on vast amounts of static, publicly available training data presents a profound challenge for corporate legal departments. When tasked with answering questions that involve proprietary, highly confidential internal data, standard LLMs are prone to producing answers that lack accuracy or relevance, simply because they have no direct access to the organization's private datasets.

RAG architecture provides an elegant and secure solution to this limitation. In a RAG deployment, the AI model does not rely solely on its internal parameters to formulate an answer. Instead, it dynamically integrates with external, domain-specific data sources. The RAG process breaks down into three phases: ingestion, retrieval, and generation.

During ingestion, internal corporate documents (PDFs, Excel files, legacy contracts) undergo text extraction and, for scanned material, optical character recognition (OCR). The extracted text is converted into "embeddings" (high-dimensional vector representations of the text's semantic meaning) and stored securely in a vector database (for example, PostgreSQL with a vector extension).

When a legal professional submits a prompt or query, the system executes the retrieval phase. An algorithmic vector search scans the enterprise database to retrieve the most highly relevant, proprietary information based on semantic similarity. This retrieved data—the organization's confidential institutional knowledge—is then fed directly into the LLM alongside the original prompt. The LLM utilizes this specific, real-time context to generate an answer that is entirely grounded in the enterprise's private documents.
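The three phases can be sketched in a few dozen lines. This is a toy illustration under stated assumptions: word counts stand in for learned embeddings, an in-memory list stands in for the vector database, the document IDs and texts are invented, and the generation phase stops at assembling the grounded prompt rather than calling a model.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: word counts. Real systems use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

class VectorStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.rows: list[tuple[str, str, Counter]] = []

    def ingest(self, doc_id: str, text: str) -> None:
        # Ingestion: store the text alongside its embedding.
        self.rows.append((doc_id, text, embed(text)))

    def retrieve(self, query: str, k: int = 2) -> list[tuple[str, str]]:
        # Retrieval: rank stored passages by similarity to the query.
        q = embed(query)
        ranked = sorted(self.rows, key=lambda r: cosine(q, r[2]), reverse=True)
        return [(doc_id, text) for doc_id, text, _ in ranked[:k]]

def build_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    # Generation input: the retrieved context plus the original question.
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return f"Answer using only the context below.\n{context}\nQuestion: {query}"

store = VectorStore()
store.ingest("msa-001", "Either party may terminate the supply agreement with 90 days written notice.")
store.ingest("nda-007", "The recipient shall keep confidential information secret for five years.")
store.ingest("lease-03", "The tenant shall pay rent monthly in advance.")

question = "What notice is required to terminate the supply agreement?"
print(build_prompt(question, store.retrieve(question, k=1)))
```

Tagging each passage with its document ID lets the generated answer cite its sources, which is what makes the output auditable by a reviewing attorney.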

This methodology ensures that the AI produces tailored, precise results rather than generalized responses. Crucially for legal departments, a RAG pipeline deployed inside a private, access-controlled environment keeps sensitive corporate data off the public internet and out of external model training, because the documents stay in the organization's own store and only the retrieved passages are passed to the model at query time. The approach overcomes the limitations of traditional search while preserving accuracy, speed, and data sovereignty. Advanced implementations utilize Vertical Slice Architecture, API Gateways, and strict database-per-service patterns to guarantee modularity and rigorous data isolation.

Financial Impact and ROI

As the initial novelty of generative AI wanes, corporate boards and legal leaders are demanding empirical, irrefutable evidence of its financial and operational impact. The period encompassing 2025 and 2026 is widely recognized as the era of "AI value," where the focus has definitively shifted from theoretical experimentation to demonstrating measurable ROI.

Productivity Metrics and Quantifiable Time Savings

The most immediate and easily quantifiable metric of AI success in legal departments is time saved. Studies indicate that over two-thirds of organizations report measurable benefits within the first three months of implementation, with more than a third seeing returns within the very first month. Substantial productivity gaps are emerging between AI adopters and non-adopters. In corporate environments, "power users" of generative AI tools report saving an estimated 28.3 hours per month, compared to just 11.8 hours for standard users. Within law firms, these power users save up to 36.9 hours monthly.
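The monthly figures above can be turned into an annual estimate with simple arithmetic. In the sketch below, only the hours saved per month come from the survey data quoted in this section; the headcounts, the hourly cost, and the platform cost are hypothetical inputs chosen for illustration and would be replaced with the department's own numbers during the Project Discovery Phase.

```python
# Monthly hours saved per user in corporate legal departments (figures quoted above).
HOURS_SAVED_PER_MONTH = {"power_user": 28.3, "standard_user": 11.8}

def annual_hours_saved(profile: str, users: int) -> float:
    """Hours saved per year for a group of users with the given profile."""
    return HOURS_SAVED_PER_MONTH[profile] * 12 * users

def simple_roi(annual_benefit: float, annual_cost: float) -> float:
    """Net benefit divided by cost, as a fraction (1.0 means 100 percent)."""
    return (annual_benefit - annual_cost) / annual_cost

# Hypothetical inputs, for illustration only.
POWER_USERS, STANDARD_USERS = 10, 30
HOURLY_COST = 150.0               # assumed blended internal cost per attorney hour
ANNUAL_PLATFORM_COST = 200_000.0  # assumed licences, integration, and training

hours = (annual_hours_saved("power_user", POWER_USERS)
         + annual_hours_saved("standard_user", STANDARD_USERS))
benefit = hours * HOURLY_COST
print(f"Hours saved per year: {hours:,.0f}")
print(f"Simple ROI: {simple_roi(benefit, ANNUAL_PLATFORM_COST):.0%}")
```

This treats every saved hour as fully redeployable, which overstates the benefit if the time is not actually reallocated to other matters; a more conservative model would apply a utilization factor to `hours`.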

This massive reduction in time spent on low-level, repetitive tasks directly correlates to increased operational capacity. Legal professionals express high optimism regarding AI's potential to streamline workflows, allowing them to shift their focus from rote administrative work to strategic foresight, complex judgment, and proactive risk mitigation. By freeing human bandwidth, corporate legal teams can handle a significantly higher volume of matters without proportional increases in headcount, thereby improving vendor relationships and exerting tighter control over departmental budgets. Over 81 percent of Chief Legal Officers report that GenAI accelerates legal work and fundamentally shifts the perception of the legal department from a pure "cost center" to a strategic business enabler.

Disrupting the Outside Counsel Dynamic

A profound secondary effect of GenAI's value realization is its disruptive impact on the traditional relationship between corporate legal departments and their outside counsel. Historically, routine legal work—such as foundational research, initial document review, and basic drafting—was outsourced to law firms and billed incrementally by the hour. Armed with the capabilities of generative AI, in-house teams are actively seeking to repatriate this work.

Survey data reveals a massive strategic pivot: 78 percent of in-house professionals see significant opportunities to bring drafting in-house using GenAI. This is closely followed by contract management (71 percent), legal research (62 percent), process automation (55 percent), and eDiscovery operations (29 percent).

| Legal Tasks Identified for In-House Repatriation via GenAI | Percentage of Corporate Counsel Recognizing Opportunity |
| --- | --- |
| Document Drafting | 78% |
| Contract Management | 71% |
| Legal Research | 62% |
| Process Automation | 55% |
| eDiscovery and Litigation Support | 29% |
| M&A Support / Due Diligence | 29% |
| Risk Analysis | 21% |

This dynamic is exerting immense, structural pressure on the traditional billable hour. Nearly a quarter (24 percent) of legal professionals report that they will "very likely" leverage AI-driven efficiencies to push for a complete restructuring or abandonment of the billable hour model. Corporate counsel now expect their law firms to utilize cutting-edge technology to drive down costs, with 43 percent believing GenAI will directly help them identify specific cost-saving opportunities regarding outside counsel services, and 42 percent utilizing AI to improve the analysis of law firm billing patterns for discrepancies.

Industry Validation: High-Stakes Deployments

The theoretical benefits and ROI models of generative AI are best validated through its application in highly regulated, data-intensive industries. Financial services and pharmaceutical companies have pioneered the enterprise-scale deployment of legal and compliance AI, utilizing the technology to manage existential regulatory risks and accelerate critical operational timelines.

Financial Services: Compliance and Massive Operational Scale

The financial sector faces unparalleled regulatory scrutiny, making legal compliance, contract management, and anti-money laundering (AML) operations a massive corporate expense. Leading institutions have deployed AI to process astronomical volumes of documentation with a degree of accuracy and speed fundamentally unattainable by human reviewers.

JPMorgan Chase's implementation of the COIN (Contract Intelligence) system stands as an early, benchmark achievement for automated legal operations. By utilizing advanced AI to extract data and analyze highly complex commercial credit agreements, the institution reduced tasks that previously consumed 360,000 annual hours of lawyer time to a matter of mere seconds. The COIN system processes over 12,000 agreements yearly, extracting more than 150 distinct contract attributes with a precision rate that exceeds that of human attorneys, resulting in millions of dollars in direct operational savings. This allowed JPMorgan to free up its legal staff for higher-value strategic work while strictly maintaining regulatory compliance standards.

Similarly, Goldman Sachs has aggressively integrated generative AI to automate intensive document analysis and internal coding procedures, reporting significant productivity boosts across its legal and compliance divisions. HSBC has redefined anti-money laundering and dynamic risk assessment by replacing traditional, rigid rules-based systems with dynamic AI frameworks capable of analyzing massive, unstructured datasets for financial crime. Bank of America's deployment of Erica, while functioning as a virtual assistant, demonstrates the enterprise scale achievable with tightly controlled AI models, managing hundreds of millions of interactions and significantly reducing IT and internal support burdens. The broader adoption trend in finance is staggering; nearly 98 percent of banks currently use or plan to use generative AI, relying heavily on cloud-based enterprise services like Microsoft Azure OpenAI to ensure robust security architectures. Furthermore, BNY Mellon's deployment of NVIDIA DGX SuperPOD infrastructure supports hundreds of identified AI opportunities, signaling a fundamental transformation of wealth management and banking operations.

Pharmaceuticals: Accelerating Innovation and IP Management

In the pharmaceutical industry, the intersection of legal operations, intellectual property (IP) management, and research and development (R&D) is critical to corporate survival. Companies like Pfizer, Novartis, GlaxoSmithKline (GSK), and MilliporeSigma are leveraging AI at an enterprise scale to manage the massive data loads associated with drug discovery, patent protection, and regulatory compliance.

Pfizer, through its Pfizer-Amazon Collaboration Team (PACT) initiative, utilizes AWS generative AI and machine learning capabilities to optimize the digital drug development pipeline. By applying these technologies to analyze laboratory data and clinical trial documentation, Pfizer saves its scientists and regulatory compliance teams up to 16,000 hours of search time annually, while simultaneously reducing infrastructure costs by 55 percent. Furthermore, Pfizer's implementation of its generative AI platform, Vox, represents a comprehensive integration of AI into pharmaceutical operations, enabling rapid knowledge retrieval across vast internal datasets using AWS cloud services.

Novartis has established over 100 dedicated AI use cases across its enterprise, aiming to compress the timeline for bringing medicines to patients and reducing operational costs by billions of dollars. This includes optimizing business processes, commercial activities, and the drafting and analysis of complex legal agreements associated with clinical trials and biomedical research. GSK has heavily invested in digital twin technologies and machine learning platforms, partnering with Siemens and Atos to utilize AI in maintaining stringent regulatory requirements and process controls in vaccine manufacturing environments. MilliporeSigma deployed its AIDDISON platform to integrate generative AI and ML into drug design and synthesis. In these highly regulated sectors, the ability of specialized legal AI tools to spot missing clauses, misaligned terms, and liability gaps is crucial, as compliance failures carry existential organizational and financial risks.

Risk Management and Governance

While the operational benefits are immense, the deployment of generative AI introduces profound, systemic risks to data privacy, confidentiality, and the foundational legal concept of attorney-client privilege. The fundamental architecture of public, consumer-grade LLMs—which routinely ingest user inputs to train future iterations of their models—is inherently incompatible with the fiduciary duties of legal practitioners.

The Landmark Implications of United States v. Heppner

The strict legal parameters governing AI usage were starkly defined by the U.S. District Court for the Southern District of New York in the highly consequential recent case of United States v. Heppner. In this landmark ruling, a criminal defendant facing federal fraud charges utilized Anthropic's generative AI platform, Claude, to process information gleaned from his attorneys and to draft documents outlining potential defense strategies. Operating without direct instruction from his counsel, the defendant shared the AI's outputs with his lawyers, which subsequently influenced their strategic approach. The defendant then attempted to assert attorney-client privilege and work-product protection over the digital exchanges with the AI.

Judge Jed S. Rakoff ruled unequivocally that the AI-generated content was not protected by either legal doctrine. The court's reasoning hinged heavily on the expectation of confidentiality. Because the defendant used a publicly available, third-party AI platform whose privacy policy explicitly stated that user inputs could be retained, used for model training, and potentially disclosed to third parties (including government authorities), the court determined that the user forfeited any reasonable expectation of privacy.

Furthermore, the court emphasized that transmitting the AI-generated content to a lawyer after the fact did not retroactively shield the documents with privilege. The AI platform did not constitute an agent of the attorney, nor were the materials created at the explicit direction of counsel to facilitate the provision of legal advice. The Heppner ruling serves as a vital cautionary tale for corporate legal departments: using consumer-grade AI tools that lack strict, contractual data isolation can forfeit attorney-client privilege and expose highly sensitive corporate strategies to discovery.

The Distinction Between Public and Bespoke Systems

To preserve privilege and confidentiality, corporate legal teams must strictly forbid the use of "shadow AI"—the unauthorized use of public AI tools by employees—through stringent access governance and logging protocols. Instead, organizations must deploy bespoke or enterprise-tier AI systems.

Under English law, Legal Advice Privilege (LAP) and Litigation Privilege (LP) protect communications made for the purpose of legal advice or litigation, but these protections depend on the communications remaining confidential. Public systems (like the free version of ChatGPT) that process and store inputs put this confidentiality at risk. By contrast, bespoke enterprise systems are typically offered with zero data retention terms under which prompts and uploaded documents are isolated, are never used to train the vendor's underlying foundation models, and are protected by robust encryption. When AI is used under direct attorney supervision, within secure, private instances featuring strict access controls and data masking, traditional privilege arguments remain highly viable.

The NIST AI Risk Management Framework

In the United States, the National Institute of Standards and Technology (NIST) AI Risk Management Framework (RMF) has emerged as the preeminent standard for AI governance. Released in early 2023, the NIST AI RMF is a voluntary, highly flexible guide designed to help organizations identify, assess, manage, and monitor risks across the entire lifecycle of an AI system.

The framework is organized around four core functions (Govern, Map, Measure, and Manage), which together encompass 19 categories and 72 subcategories of granular risk mitigation. It emphasizes building AI systems that are transparent, fair, accountable, and robust against bias. Although it is not legally binding, the NIST AI RMF is widely treated as a de facto standard of care by federal agencies (such as the CDC), in state legislation (such as Montana's "Right to Compute" law), and by corporate boards. Aligning corporate AI policies with the NIST framework can reduce reputational and legal exposure by providing a structured, documented defense should an AI-assisted decision be challenged in court or by regulatory bodies. Furthermore, the federal executive branch is pushing for the preemption of burdensome state AI laws in favor of a national standard aligned with NIST principles.

| NIST AI RMF Core Function | Objective within the Enterprise AI Lifecycle |
| --- | --- |
| Govern | Cultivates a culture of risk management; establishes policies, accountability, and organizational structures for AI oversight. |
| Map | Identifies context, categorizes AI systems, and assesses potential impacts and risks associated with specific deployments. |
| Measure | Employs quantitative and qualitative techniques to analyze, assess, and benchmark AI risks and system trustworthiness. |
| Manage | Prioritizes risks and implements actionable strategies to maximize benefits while minimizing negative impacts and biases. |

ISO/IEC 42001 Certification and Global Compliance

On the global stage, ISO/IEC 42001 stands as the first internationally recognized certifiable standard for Artificial Intelligence Management Systems (AIMS). For multinational corporate legal departments navigating disparate global regulations—most notably the stringent requirements of the EU AI Act—ISO/IEC 42001 provides a holistic, unified approach to AI risk management.

Achieving ISO/IEC 42001 certification requires organizations to undergo a rigorous, multi-phased third-party auditing process.

| ISO/IEC 42001 Audit Phase | Description of Assessment Requirements |
| --- | --- |
| Scope Definition | Identifying specific AI systems, services, sites, and their relevant legal contexts. |
| Risk Assessment | Evaluating technical, ethical, and legal risks and defining comprehensive mitigation controls. |
| Documentation Review | Assessing internal controls and ensuring strict alignment with ISO 42001 documentation standards. |
| Operational Audit | Confirming physical/digital implementation, stakeholder roles, and overall system effectiveness. |
| Post-Audit Measures | Applying and verifying any required corrective actions identified during the audit. |
| Certification Issuance | Valid for three years, requiring annual surveillance and full re-certification in year three. |

The standard requires a clearly defined organizational context, leadership commitment to AI governance, comprehensive system impact assessments, and transparent documentation protocols that support explainability and bias mitigation. Certification demonstrates to regulators, clients, and internal stakeholders that the legal department has implemented stringent controls over data governance. By requiring external legal tech vendors to maintain ISO/IEC 42001 certification as well, corporate law departments can shift a significant portion of their compliance burden onto those vendors and gain assurance that the technology supply chain adheres to recognized ethical and operational standards.

The Human-in-the-Loop (HITL) Imperative and Ethical Supervision

Beyond structural frameworks and certifications, the most critical operational safeguard in legal AI deployment is the strict enforcement of the Human-in-the-Loop (HITL) principle. Legal professionals are inextricably bound by strict ethical duties of competence, communication, and supervision. Generative AI, regardless of its mathematical sophistication, cannot independently guarantee compliance with these professional standards or accurately navigate the fluid ambiguities of jurisprudence.

Unchecked reliance on AI outputs carries the severe risk of embedding factual inaccuracies, systemic biases, or procedural errors into legal workflows. As evidenced by highly publicized instances of practitioners submitting fabricated case law to courts, human review acts as the ultimate safeguard. Corporate policies must explicitly mandate robust human oversight, aligning with Department of Labor guidelines that encourage transparency and independent audits of AI systems impacting employment decisions.

Best practices for HITL output verification require attorneys to execute a rigorous sequence of checks before finalizing any AI-generated legal content. This involves confirming the tool guarantees Zero Data Retention, manually cross-checking all citations and sources, analyzing the quality of the algorithmic reasoning, confirming the correct application of jurisdictional rules, actively screening for bias or mischaracterization, and mandating a final human sign-off.
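The verification sequence above can be expressed as a simple gate that blocks finalization until every check is recorded. The following is a minimal sketch, not a prescribed implementation: the class name `HitlChecklist` and its field names are hypothetical and simply mirror the six checks listed in the preceding paragraph.

```python
from dataclasses import dataclass, fields


@dataclass
class HitlChecklist:
    """One reviewer's verification record for a single AI-generated draft.

    Field names are hypothetical; each mirrors one check from the text.
    """
    zero_data_retention_confirmed: bool = False
    citations_cross_checked: bool = False
    reasoning_quality_reviewed: bool = False
    jurisdictional_rules_confirmed: bool = False
    bias_screen_completed: bool = False
    human_sign_off: bool = False

    def outstanding(self) -> list[str]:
        """Return the names of checks not yet completed, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_to_finalize(self) -> bool:
        """A draft may be finalized only when no check is outstanding."""
        return not self.outstanding()


# Example: every check is done except the final attorney sign-off.
review = HitlChecklist(
    zero_data_retention_confirmed=True,
    citations_cross_checked=True,
    reasoning_quality_reviewed=True,
    jurisdictional_rules_confirmed=True,
    bias_screen_completed=True,
)
print(review.ready_to_finalize())  # False
print(review.outstanding())        # ['human_sign_off']
```

Because every field defaults to `False`, a check that is never recorded counts as not performed, so the gate fails closed rather than open.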

Integrating HITL is not merely a post-generation review step; it must be embedded throughout the entire AI lifecycle. This includes involving domain experts in curating the initial training data, running edge-case simulations during model testing to detect failure modes, and maintaining comprehensive audit trails and real-time dashboards that document manual overrides during production use. As courts have made clear, the legal profession can safely capture the speed and scalability of AI only if it deliberately preserves human judgment, training, and professional accountability.

Investment Ask: Project Discovery Phase and Budget Forecast

To capitalize on this transformative technology and move from theoretical planning to enterprise value realization, we are requesting executive approval and funding for a formal Project Discovery Phase.

This initial phase is critical for mitigating implementation risk. The discovery process will involve a comprehensive task audit across the legal department to identify workflows with the highest AI suitability and establish our foundational prompt engineering methodologies. We will utilize this phase to evaluate bespoke platforms, finalize our Document Management System (DMS) integration strategy, and define strict data governance protocols.

Furthermore, this phase will allow us to build a precise Total Cost of Ownership (TCO) model. Current industry benchmarks indicate that developing and integrating a custom Generative AI application requires a capital expenditure ranging from $150,000 to $500,000, while deploying a comprehensive enterprise AI platform can range from $400,000 to over $1 million. In addition to upfront capital, our TCO model must account for ongoing model maintenance, security patching, and fine-tuning, which typically costs $15,000 to $25,000 annually and is needed to prevent model performance from degrading.
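As a rough illustration of how these benchmarks combine, the sketch below adds upfront capital to cumulative annual maintenance. It rests on assumptions the report does not state: a three-year planning horizon, the same maintenance range applied to both options, and $1 million used as the enterprise upper figure even though the benchmark says "over $1 million". The function name `tco_range` is hypothetical.

```python
def tco_range(capex_low, capex_high, annual_low, annual_high, years):
    """Return (low, high) total cost of ownership over `years`.

    TCO = upfront capital expenditure + annual maintenance * years.
    """
    return (capex_low + annual_low * years, capex_high + annual_high * years)


YEARS = 3  # assumed planning horizon; not specified in the report

# Benchmarks from the text; maintenance is assumed equal for both options.
custom_app = tco_range(150_000, 500_000, 15_000, 25_000, YEARS)
# 1_000_000 is a floor for the upper bound ("over $1 million").
enterprise = tco_range(400_000, 1_000_000, 15_000, 25_000, YEARS)

print(custom_app)   # (195000, 575000)
print(enterprise)   # (445000, 1075000)
```

Under these assumptions, maintenance adds $45,000 to $75,000 over three years, a small share of either option's total, so the capital expenditure estimate is the figure the discovery phase most needs to pin down.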

Funding this Project Discovery Phase will empower our team to finalize the technical roadmap, execute vendor selection, and deliver a definitive ROI timeline to the board, ensuring our legal department evolves into a proactive, data-driven engine of business enablement.
