The Best Prompt Guide for AI Agents Is a Movie

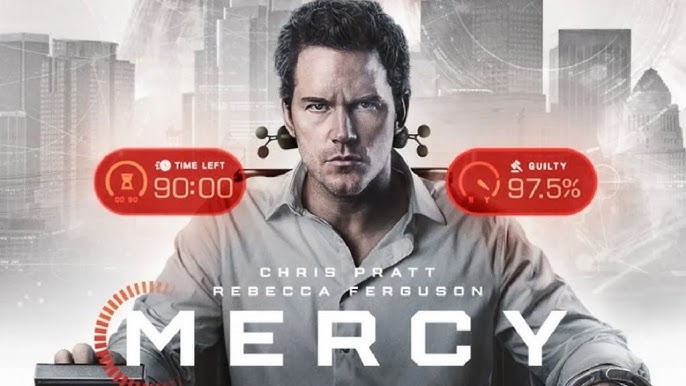

A detective wakes up accused of murder. An AI judge gives him 90 minutes to prove innocence. His guilt score: 97.5%. Miss the threshold, and the sentence is death. It's fiction — but the architecture is closer to production than most teams realize.

What Mercy Reveals About Agentic Architecture, Algorithmic Scoring, and the Thresholds We Don't Talk About

Mercy, released in January 2026 by Amazon MGM Studios, isn't a great film. Critics were harsh — 24% on Rotten Tomatoes, a consensus calling it "tedious enough to make you cry uncle." The plot has holes. The logic strains credulity.

But as a product manager watching it, I couldn't stop taking notes.

Not because of the story. Because of the system. The AI judge in Mercy — Judge Maddox, played with unsettling precision by Rebecca Ferguson — is the most concrete demonstration of agentic AI architecture I've seen on screen. And the uncomfortable questions the film accidentally raises are the same ones most product teams are actively avoiding.

The Setup

The film is set in 2029 Los Angeles. Crime is out of control. In response, the Mercy Capital Court has been established — a judicial system powered entirely by artificial intelligence. No human judges. No jury. No lawyers. Just a defendant strapped to a chair, facing an AI avatar on a screen.

The rules: if the algorithm calculates a conviction probability above a threshold, the trial begins. The defendant gets 90 minutes and access to any digital evidence they can find to lower their guilt score. Fail to bring the score below the threshold, and the sentence (execution via sonic blast) is carried out immediately. No appeals.

Detective Chris Raven (Chris Pratt), the cop who championed the creation of this court, wakes up hungover, shackled, and accused of murdering his wife. His guilt score starts at 97.5%. The clock begins.

It's pulpy science fiction. But the systems underneath it are surprisingly instructive.

What the Film Demonstrates About Agentic Architecture

Strip away the thriller mechanics, and Mercy is a walkthrough of agentic AI patterns that most product teams are still figuring out.

Multi-Agent Flow

The investigation in Mercy isn't one prompt producing one answer. It's multiple parallel investigation threads — surveillance footage, phone records, financial transactions, relationship histories, physical evidence — all converging under a hard deadline. Judge Maddox orchestrates these threads, pulling from different data sources, correlating findings, and updating the guilt score in real time.

This is multi-agent orchestration. In production AI systems, the same pattern applies: you don't solve complex problems with a single model call. You decompose the task, route sub-problems to specialized agents, aggregate results, and resolve conflicts between findings. The challenge — as the film illustrates — is coordination under constraint. Time pressure, incomplete data, and contradictory evidence don't disappear just because you have more agents running.
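To make the pattern concrete, here is a minimal sketch of decompose, run in parallel, and aggregate under a deadline. Everything in it is hypothetical: the agent names, the simulated delays, and the `Finding` fields are illustrative assumptions, not how the film's system or any particular framework works.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Finding:
    source: str           # which evidence thread produced this
    supports_guilt: bool  # direction of the evidence
    weight: float         # hypothetical confidence weight in [0, 1]


async def run_agent(source: str, delay_s: float, supports_guilt: bool, weight: float) -> Finding:
    """Stand-in for a specialized agent; the sleep simulates real work."""
    await asyncio.sleep(delay_s)
    return Finding(source, supports_guilt, weight)


async def orchestrate(deadline_s: float) -> dict:
    # Decompose: one task per evidence thread, all running concurrently.
    tasks = [
        asyncio.create_task(run_agent("video", 0.01, True, 0.6)),
        asyncio.create_task(run_agent("phone_records", 0.02, False, 0.8)),
        asyncio.create_task(run_agent("financial", 5.0, True, 0.9)),  # too slow for the deadline
    ]
    # Coordinate under constraint: stop waiting when the deadline hits.
    done, pending = await asyncio.wait(tasks, timeout=deadline_s)
    for task in pending:
        task.cancel()
    await asyncio.gather(*pending, return_exceptions=True)

    # Aggregate what finished, and surface contradictions instead of hiding them.
    findings = [task.result() for task in done]
    directions = {f.supports_guilt for f in findings}
    return {
        "completed": sorted(f.source for f in findings),
        "timed_out": len(pending),
        "conflict": len(directions) > 1,
    }


result = asyncio.run(orchestrate(deadline_s=0.5))
print(result)
```

The design choice worth noticing is that the orchestrator reports what it did not get (`timed_out`) and whether the evidence disagrees (`conflict`). A score computed from partial, contradictory findings should say so.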

Multimodal Search and Reasoning

Judge Maddox doesn't just process text. The system pulls video from doorbell cameras, body cams, and surveillance drones. It analyzes audio from phone calls. It processes images from crime scenes. It cross-references digital records — browser history, financial transactions, location data.

This is what real enterprise data looks like. Documents are the easy part. The hard part is building systems that can reason across modalities: connecting what someone said on a phone call to where they were on a map to what a camera captured at that location. The film presents this as seamless. In reality, multimodal reasoning remains one of the most technically demanding capabilities in production AI.

Computer-Use AI

The AI in Mercy isn't a model in a sandbox. It's embedded in infrastructure — pulling evidence from government databases, running facial recognition, initiating SWAT deployments, enforcing sentences. It doesn't just analyze. It acts. It controls.

This is the trajectory of agentic AI in enterprise: from advisory systems that recommend to autonomous systems that execute. The distinction matters enormously for product design. When an AI system can only suggest, the cost of being wrong is a bad recommendation. When it can act — approve a loan, flag a transaction, deny a claim, execute a sentence — the cost of being wrong is material harm.
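One way to encode that distinction in a product is to classify every action by its impact and refuse to execute material ones without a human sign-off. The sketch below is an assumption about how such a gate could look; `Impact`, `ProposedAction`, and `dispatch` are hypothetical names, not part of any library.

```python
from dataclasses import dataclass
from enum import Enum


class Impact(Enum):
    ADVISORY = "advisory"  # output is only a recommendation
    MATERIAL = "material"  # output changes someone's money, access, or liberty


@dataclass(frozen=True)
class ProposedAction:
    name: str
    impact: Impact


def dispatch(action: ProposedAction, human_approved: bool = False) -> str:
    """Execute advisory actions directly; hold material ones for a human."""
    if action.impact is Impact.MATERIAL and not human_approved:
        return "queued_for_human_review"
    return "executed"


print(dispatch(ProposedAction("suggest_next_document", Impact.ADVISORY)))
print(dispatch(ProposedAction("deny_claim", Impact.MATERIAL)))
print(dispatch(ProposedAction("deny_claim", Impact.MATERIAL), human_approved=True))
```

The gate is trivial on purpose. The hard product work is deciding which actions belong in the `MATERIAL` bucket.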

The Human-Machine Boundary

Here's what makes the film interesting from a product perspective, despite its flaws.

The AI had all the data. Total surveillance coverage, every digital record, every camera feed. But it still needed a human — the detective — to direct the investigation in ways the system couldn't anticipate.

As Pratt's character tells Judge Maddox: the facts aren't where the investigation ends. They're where it starts.

The machine had the map. The human chose what mattered. The detective made intuitive leaps — questioning motives the algorithm couldn't model, exploring relationships the data didn't surface, following hunches that contradicted the probability score.

This is the core design challenge in every agentic AI product: where does the machine's jurisdiction end and the human's begin? The film's answer — imperfect, but directionally right — is that AI excels at processing, correlating, and scoring, but fails at imagination, context, and judgment about what matters. Those are human functions. Products that eliminate the human from that loop don't become more efficient. They become more brittle.

The Uncomfortable Questions

Mercy doesn't interrogate its own premises deeply enough. Multiple critics noted this — the film presents itself as a critique of algorithmic justice while implicitly endorsing total surveillance. But the questions it surfaces, even accidentally, are the ones product teams need to answer.

If Guilt Is a Probability, Who Picks the Cutoff?

The Mercy Court triggers a trial when the conviction probability crosses a threshold. In the film, it's somewhere around 80%. The execution happens if the defendant can't lower the score enough. The threshold is the entire moral architecture of the system — and no one in the film questions who set it or why.

This is not science fiction. Credit scoring, fraud detection, hiring algorithms, and insurance underwriting all operate on probability thresholds. A score above X means denial, investigation, or rejection. The threshold is a moral choice wearing math.

Real cases make this concrete. The Apple Card controversy in 2019, in which a customer reported that the algorithm offered him twenty times the credit limit of his wife despite her higher credit score, led to a New York Department of Financial Services investigation. The algorithm was technically compliant with fair lending laws, but failed the transparency test. In 2024, the CFPB separately fined Apple $25 million and Goldman Sachs $45 million over Apple Card customer-service failures.

The Netherlands' SyRI system, designed to detect welfare fraud through algorithmic scoring, was struck down by a Dutch court in 2020. The court found the system lacked transparency, its algorithm was completely opaque, and the risk of discrimination was unacceptable. After years of operation and millions invested, the system had failed to detect a single case of fraud.

Every AI product that scores people operates on a threshold someone chose. The question is whether that choice was made deliberately, with accountability, or whether it was inherited from a default setting that no one revisited.
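A small worked example shows why the cutoff is a choice and not a discovery. The six scored cases below are made up for illustration, and `error_counts` is a hypothetical helper. The model and its scores never change; only the threshold does.

```python
# Each case: (model score, whether the person was actually guilty).
# The data is invented purely to illustrate the trade-off.
CASES = [
    (0.95, True),
    (0.90, False),
    (0.85, True),
    (0.70, False),
    (0.60, True),
    (0.30, False),
]


def error_counts(cases, threshold):
    """Return (false_positives, false_negatives) when flagging score >= threshold."""
    false_positives = sum(1 for score, guilty in cases if score >= threshold and not guilty)
    false_negatives = sum(1 for score, guilty in cases if score < threshold and guilty)
    return false_positives, false_negatives


for threshold in (0.50, 0.80, 0.92):
    fp, fn = error_counts(CASES, threshold)
    print(f"threshold={threshold:.2f}  innocent flagged={fp}  guilty missed={fn}")
```

On this toy data, a cutoff of 0.50 flags two innocent people and misses no guilty ones, 0.80 gives one of each, and 0.92 flags no innocent people but misses two guilty ones. Nothing in the math says which of those is acceptable. Someone has to decide which error is worse, and by how much.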

Would You Accept Total Surveillance for More Efficient Justice?

In Mercy, the detective's defense depends entirely on ubiquitous surveillance — Ring cameras, body cams, phone records, drone footage, social media. The system works because everything is recorded. One critic observed that the film — produced by Amazon MGM Studios — prominently features Amazon's Ring cameras, and noted that Ring has partnered with AI companies that share footage with law enforcement agencies.

The film never questions whether this surveillance architecture should exist. It only asks whether it's being used correctly.

For product managers, this maps to a real design tension: every AI system that improves accuracy by consuming more personal data is implicitly asking users to accept expanded surveillance in exchange for better outcomes. The question isn't whether the system is more accurate with more data. It almost certainly is. The question is whether the accuracy gain justifies the privacy cost — and who makes that determination.

When We Remove Human Bias, Do We Also Remove Human Mercy?

The film's title is its thesis. The AI judge operates without emotion, without prejudice, without leniency. It processes facts and produces scores. That's the selling point — and the horror.

Amazon's discontinued AI recruiting tool, which systematically downgraded resumes from women's colleges because its training data reflected historical hiring patterns, demonstrated that removing human bias doesn't mean removing bias. It means encoding it differently — in ways that are harder to see and harder to challenge. Academic research confirms that AI models trained on historical data can perpetuate existing inequalities across gender, race, and disability, with the "black box" nature of many algorithms making it difficult for affected individuals to understand or contest decisions.

The EU AI Act, now in force, requires businesses to document AI decision-making processes and implement bias detection mechanisms for high-risk applications. McKinsey reports that 58% of credit risk executives cite model transparency and fairness as significant challenges. More than two-thirds of technology leaders surveyed by OneTrust say governance capabilities consistently lag behind AI deployment speed.

The mercy in Mercy isn't the court system. It's the human capacity for judgment, context, and compassion that the system was designed to eliminate. The film argues — perhaps unintentionally — that this capacity isn't a bug. It's a feature.

The Question Your Product Needs to Answer

The film ends with a point that lands harder than the filmmakers probably intended: the detective, who built the system, nearly died in it. The architect of algorithmic justice became its subject.

This is the product management version of the same scenario, scaled down from execution to everyday consequences: if you wouldn't trust an AI judge with your life, why are we comfortable letting AI score people in hiring, performance reviews, credit decisions, fraud detection, and insurance underwriting, with far less transparency than the fictional Mercy Court provides?

In the film, the defendant at least gets 90 minutes, access to all evidence, and a real-time view of the score. In most real-world AI scoring systems, people don't know they're being scored, can't see the evidence, don't understand the algorithm, and have no meaningful appeal.

The question isn't whether your product uses AI scoring. Most products do or will. The question is: where is the mercy threshold in your product?

That means defining, explicitly:

What's the confidence level required before the system acts? Not the default. Not what the model outputs. The threshold your team deliberately chose, with documented reasoning for why that number and not another.

What does the human see when the system makes a decision? If the answer is "nothing" or "a score without explanation," you've built the system Mercy warns about.

What's the appeal path? When the system gets it wrong — and it will — can the affected person challenge the decision in a way that actually changes the outcome?

Who is accountable? Not the model. Not the vendor. A human being who approved the threshold, reviewed the edge cases, and owns the consequences.

What gets measured for fairness — and how often? Regular algorithmic audits, not annual compliance reviews. Continuous monitoring for disparate outcomes across demographic groups. Published results, not internal memos.
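Those five questions can be written down as data, not left as intentions. The following is a minimal sketch under stated assumptions: `ThresholdPolicy`, `Decision`, `decide`, and `approval_rate_ratio` are hypothetical names, and the policy values, owner, and appeal address are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdPolicy:
    threshold: float     # the cutoff the team deliberately chose
    rationale: str       # documented reasoning for that number
    owner: str           # the accountable human, not the model or vendor
    appeal_channel: str  # where an affected person can contest the outcome


@dataclass(frozen=True)
class Decision:
    flagged: bool
    explanation: str     # what the affected person gets to see
    appeal_channel: str
    accountable_owner: str


def decide(score: float, top_factors: list, policy: ThresholdPolicy) -> Decision:
    """Apply the documented threshold and attach explanation, appeal path, and owner."""
    explanation = (
        f"score {score:.2f} vs threshold {policy.threshold:.2f}; "
        f"top factors: {', '.join(top_factors)}"
    )
    return Decision(score >= policy.threshold, explanation, policy.appeal_channel, policy.owner)


def approval_rate_ratio(outcomes_by_group: dict) -> float:
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = [sum(outcomes) / len(outcomes) for outcomes in outcomes_by_group.values()]
    return 1.0 if max(rates) == 0 else min(rates) / max(rates)


policy = ThresholdPolicy(
    threshold=0.80,
    rationale="placeholder: chosen after reviewing error costs with legal and ops",
    owner="placeholder: named product owner",
    appeal_channel="placeholder: appeals@example.com",
)
decision = decide(0.86, ["late payments", "account age"], policy)
ratio = approval_rate_ratio({
    "group_a": [True, True, True, False],    # 75% approved
    "group_b": [True, False, False, False],  # 25% approved
})
print(decision.explanation)
print(f"approval rate ratio: {ratio:.2f}")
```

The ratio here comes out to about 0.33. A common rule of thumb in US employment contexts, the four-fifths guideline, treats anything below 0.80 as a signal worth investigating. It is a screening heuristic, not proof of discrimination, and the right metric for a given product is itself a decision someone has to own.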

The Machine Had the Map. The Human Chose What Mattered.

Mercy isn't a good movie. But it's a useful provocation.

It demonstrates, in 100 minutes of admittedly clunky cinema, the agentic architecture patterns that product teams are building toward — multi-agent orchestration, multimodal reasoning, computer-use AI with real-world authority. And it exposes, more honestly than most industry conferences, the ethical territory that comes with those capabilities.

The AI in the film had total information and zero judgment. It could correlate everything and understand nothing. It could score guilt to two decimal places and couldn't recognize innocence when it was staring at the data.

That's not a flaw in the movie's logic. That's a design brief for every product team building AI systems that score, classify, or decide on behalf of humans.

Build the system. Define the threshold. But make sure there's room for mercy — because a probability score is not a verdict, no matter how many decimal places it carries.
